Write a Python Script Named Assignment6.py That Reads the File Toycars.csv Into a Pandas Dataframe.


Pandas is a powerful and flexible Python package that allows you to work with labeled and time series data. It also provides statistics methods, enables plotting, and more. One crucial feature of Pandas is its ability to write and read Excel, CSV, and many other types of files. Functions like read_csv() enable you to work with files effectively. You can use them to save the data and labels from Pandas objects to a file and load them later as Pandas Series or DataFrame instances.

In this tutorial, you'll learn:

- What the Pandas IO tools API is

- How to read and write data to and from files

- How to work with various file formats

- How to work with big data efficiently

Let's start reading and writing files!

Installing Pandas

The code in this tutorial is executed with CPython 3.7.4 and Pandas 0.25.1. It would be beneficial to make sure you have the latest versions of Python and Pandas on your machine. You might want to create a new virtual environment and install the dependencies for this tutorial.

First, you'll need the Pandas library. You may already have it installed. If you don't, then you can install it with pip:

$ pip install pandas

Once the installation process completes, you should have Pandas installed and ready.

Anaconda is an excellent Python distribution that comes with Python, many useful packages like Pandas, and a package and environment manager called Conda. To learn more about Anaconda, check out Setting Up Python for Machine Learning on Windows.

If you don't have Pandas in your virtual environment, then you can install it with Conda:

$ conda install pandas

Conda is powerful as it manages the dependencies and their versions. To learn more about working with Conda, you can check out the official documentation.

Preparing Data

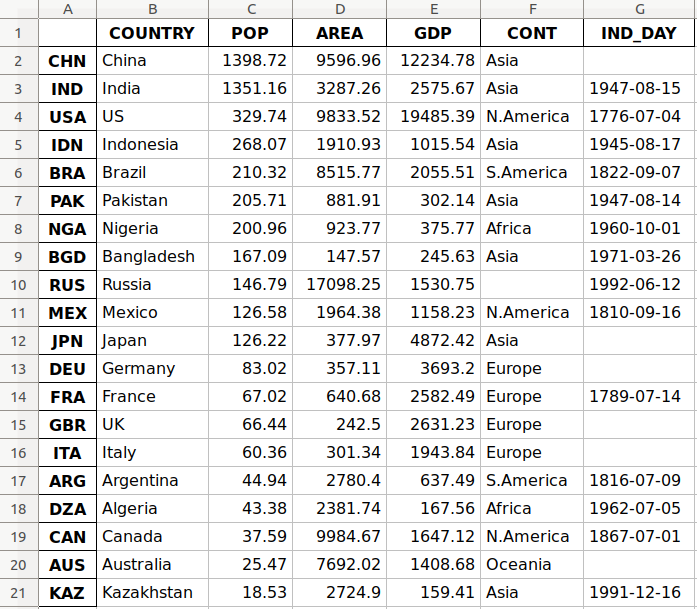

In this tutorial, you'll use the data related to 20 countries. Here's an overview of the data and sources you'll be working with:

- Country is denoted by the country name. Each country is in the top 10 list for either population, area, or gross domestic product (GDP). The row labels for the dataset are the three-letter country codes defined in ISO 3166-1. The column label for the dataset is COUNTRY.

- Population is expressed in millions. The data comes from a list of countries and dependencies by population on Wikipedia. The column label for the dataset is POP.

- Area is expressed in thousands of kilometers squared. The data comes from a list of countries and dependencies by area on Wikipedia. The column label for the dataset is AREA.

- Gross domestic product is expressed in billions of U.S. dollars, according to the United Nations data for 2017. You can find this data in the list of countries by nominal GDP on Wikipedia. The column label for the dataset is GDP.

- Continent is either Africa, Asia, Oceania, Europe, North America, or South America. You can find this data on Wikipedia as well. The column label for the dataset is CONT.

- Independence day is a date that commemorates a nation's independence. The data comes from the list of national independence days on Wikipedia. The dates are shown in ISO 8601 format. The first four digits represent the year, the next two numbers are the month, and the last two are for the day of the month. The column label for the dataset is IND_DAY.

This is how the data looks as a table:

| | COUNTRY | POP | AREA | GDP | CONT | IND_DAY |
|---|---|---|---|---|---|---|
| CHN | China | 1398.72 | 9596.96 | 12234.78 | Asia | |
| IND | India | 1351.16 | 3287.26 | 2575.67 | Asia | 1947-08-15 |
| USA | US | 329.74 | 9833.52 | 19485.39 | N.America | 1776-07-04 |
| IDN | Indonesia | 268.07 | 1910.93 | 1015.54 | Asia | 1945-08-17 |
| BRA | Brazil | 210.32 | 8515.77 | 2055.51 | S.America | 1822-09-07 |
| PAK | Pakistan | 205.71 | 881.91 | 302.14 | Asia | 1947-08-14 |
| NGA | Nigeria | 200.96 | 923.77 | 375.77 | Africa | 1960-10-01 |
| BGD | Bangladesh | 167.09 | 147.57 | 245.63 | Asia | 1971-03-26 |
| RUS | Russia | 146.79 | 17098.25 | 1530.75 | | 1992-06-12 |
| MEX | Mexico | 126.58 | 1964.38 | 1158.23 | N.America | 1810-09-16 |
| JPN | Japan | 126.22 | 377.97 | 4872.42 | Asia | |
| DEU | Germany | 83.02 | 357.11 | 3693.20 | Europe | |
| FRA | France | 67.02 | 640.68 | 2582.49 | Europe | 1789-07-14 |
| GBR | UK | 66.44 | 242.50 | 2631.23 | Europe | |
| ITA | Italy | 60.36 | 301.34 | 1943.84 | Europe | |
| ARG | Argentina | 44.94 | 2780.40 | 637.49 | S.America | 1816-07-09 |
| DZA | Algeria | 43.38 | 2381.74 | 167.56 | Africa | 1962-07-05 |
| CAN | Canada | 37.59 | 9984.67 | 1647.12 | N.America | 1867-07-01 |
| AUS | Australia | 25.47 | 7692.02 | 1408.68 | Oceania | |
| KAZ | Kazakhstan | 18.53 | 2724.90 | 159.41 | Asia | 1991-12-16 |

You may notice that some of the data is missing. For example, the continent for Russia is not specified because it spreads across both Europe and Asia. There are also several missing independence days because the data source omits them.

You can organize this data in Python using a nested dictionary:

data = {
    'CHN': {'COUNTRY': 'China', 'POP': 1_398.72, 'AREA': 9_596.96,
            'GDP': 12_234.78, 'CONT': 'Asia'},
    'IND': {'COUNTRY': 'India', 'POP': 1_351.16, 'AREA': 3_287.26,
            'GDP': 2_575.67, 'CONT': 'Asia', 'IND_DAY': '1947-08-15'},
    'USA': {'COUNTRY': 'US', 'POP': 329.74, 'AREA': 9_833.52,
            'GDP': 19_485.39, 'CONT': 'N.America', 'IND_DAY': '1776-07-04'},
    'IDN': {'COUNTRY': 'Indonesia', 'POP': 268.07, 'AREA': 1_910.93,
            'GDP': 1_015.54, 'CONT': 'Asia', 'IND_DAY': '1945-08-17'},
    'BRA': {'COUNTRY': 'Brazil', 'POP': 210.32, 'AREA': 8_515.77,
            'GDP': 2_055.51, 'CONT': 'S.America', 'IND_DAY': '1822-09-07'},
    'PAK': {'COUNTRY': 'Pakistan', 'POP': 205.71, 'AREA': 881.91,
            'GDP': 302.14, 'CONT': 'Asia', 'IND_DAY': '1947-08-14'},
    'NGA': {'COUNTRY': 'Nigeria', 'POP': 200.96, 'AREA': 923.77,
            'GDP': 375.77, 'CONT': 'Africa', 'IND_DAY': '1960-10-01'},
    'BGD': {'COUNTRY': 'Bangladesh', 'POP': 167.09, 'AREA': 147.57,
            'GDP': 245.63, 'CONT': 'Asia', 'IND_DAY': '1971-03-26'},
    'RUS': {'COUNTRY': 'Russia', 'POP': 146.79, 'AREA': 17_098.25,
            'GDP': 1_530.75, 'IND_DAY': '1992-06-12'},
    'MEX': {'COUNTRY': 'Mexico', 'POP': 126.58, 'AREA': 1_964.38,
            'GDP': 1_158.23, 'CONT': 'N.America', 'IND_DAY': '1810-09-16'},
    'JPN': {'COUNTRY': 'Japan', 'POP': 126.22, 'AREA': 377.97,
            'GDP': 4_872.42, 'CONT': 'Asia'},
    'DEU': {'COUNTRY': 'Germany', 'POP': 83.02, 'AREA': 357.11,
            'GDP': 3_693.20, 'CONT': 'Europe'},
    'FRA': {'COUNTRY': 'France', 'POP': 67.02, 'AREA': 640.68,
            'GDP': 2_582.49, 'CONT': 'Europe', 'IND_DAY': '1789-07-14'},
    'GBR': {'COUNTRY': 'UK', 'POP': 66.44, 'AREA': 242.50,
            'GDP': 2_631.23, 'CONT': 'Europe'},
    'ITA': {'COUNTRY': 'Italy', 'POP': 60.36, 'AREA': 301.34,
            'GDP': 1_943.84, 'CONT': 'Europe'},
    'ARG': {'COUNTRY': 'Argentina', 'POP': 44.94, 'AREA': 2_780.40,
            'GDP': 637.49, 'CONT': 'S.America', 'IND_DAY': '1816-07-09'},
    'DZA': {'COUNTRY': 'Algeria', 'POP': 43.38, 'AREA': 2_381.74,
            'GDP': 167.56, 'CONT': 'Africa', 'IND_DAY': '1962-07-05'},
    'CAN': {'COUNTRY': 'Canada', 'POP': 37.59, 'AREA': 9_984.67,
            'GDP': 1_647.12, 'CONT': 'N.America', 'IND_DAY': '1867-07-01'},
    'AUS': {'COUNTRY': 'Australia', 'POP': 25.47, 'AREA': 7_692.02,
            'GDP': 1_408.68, 'CONT': 'Oceania'},
    'KAZ': {'COUNTRY': 'Kazakhstan', 'POP': 18.53, 'AREA': 2_724.90,
            'GDP': 159.41, 'CONT': 'Asia', 'IND_DAY': '1991-12-16'}
}

columns = ('COUNTRY', 'POP', 'AREA', 'GDP', 'CONT', 'IND_DAY')

Each row of the table is written as an inner dictionary whose keys are the column names and values are the corresponding data. These dictionaries are then collected as the values in the outer data dictionary. The corresponding keys for data are the three-letter country codes.

You can use this data to create an instance of a Pandas DataFrame. First, you need to import Pandas:

>>> import pandas as pd

Now that you have Pandas imported, you can use the DataFrame constructor and data to create a DataFrame object.

data is organized in such a way that the country codes correspond to columns. You can swap the rows and columns of a DataFrame (transpose it) with the property .T:

>>> df = pd.DataFrame(data=data).T
>>> df
        COUNTRY      POP     AREA      GDP       CONT     IND_DAY
CHN       China  1398.72  9596.96  12234.8       Asia         NaN
IND       India  1351.16  3287.26  2575.67       Asia  1947-08-15
USA          US   329.74  9833.52  19485.4  N.America  1776-07-04
IDN   Indonesia   268.07  1910.93  1015.54       Asia  1945-08-17
BRA      Brazil   210.32  8515.77  2055.51  S.America  1822-09-07
PAK    Pakistan   205.71   881.91   302.14       Asia  1947-08-14
NGA     Nigeria   200.96   923.77   375.77     Africa  1960-10-01
BGD  Bangladesh   167.09   147.57   245.63       Asia  1971-03-26
RUS      Russia   146.79  17098.2  1530.75        NaN  1992-06-12
MEX      Mexico   126.58  1964.38  1158.23  N.America  1810-09-16
JPN       Japan   126.22   377.97  4872.42       Asia         NaN
DEU     Germany    83.02   357.11   3693.2     Europe         NaN
FRA      France    67.02   640.68  2582.49     Europe  1789-07-14
GBR          UK    66.44    242.5  2631.23     Europe         NaN
ITA       Italy    60.36   301.34  1943.84     Europe         NaN
ARG   Argentina    44.94   2780.4   637.49  S.America  1816-07-09
DZA     Algeria    43.38  2381.74   167.56     Africa  1962-07-05
CAN      Canada    37.59  9984.67  1647.12  N.America  1867-07-01
AUS   Australia    25.47  7692.02  1408.68    Oceania         NaN
KAZ  Kazakhstan    18.53   2724.9   159.41       Asia  1991-12-16

Now you have your DataFrame object populated with the data about each country.

Versions of Python older than 3.6 did not guarantee the order of keys in dictionaries. To ensure the order of columns is maintained for older versions of Python and Pandas, you can specify index=columns:

>>> df = pd.DataFrame(data=data, index=columns).T

Now that you've prepared your data, you're ready to start working with files!
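To see the transposition on a smaller scale, here is a minimal sketch that uses a three-country subset of the dictionary. The names sample, df_t, and df_idx are made up for this illustration. It also shows DataFrame.from_dict() with orient='index' as one possible alternative to .T, which is not the approach this tutorial uses.

```python
import pandas as pd

# A three-country subset of the tutorial's nested dictionary (illustrative only).
sample = {
    'CHN': {'COUNTRY': 'China', 'POP': 1_398.72, 'CONT': 'Asia'},
    'IND': {'COUNTRY': 'India', 'POP': 1_351.16, 'CONT': 'Asia',
            'IND_DAY': '1947-08-15'},
    'RUS': {'COUNTRY': 'Russia', 'POP': 146.79, 'IND_DAY': '1992-06-12'},
}

# The outer keys become columns, so transpose to get one row per country.
df_t = pd.DataFrame(data=sample).T

# Alternative: tell pandas that the outer keys are row labels.
df_idx = pd.DataFrame.from_dict(sample, orient='index')

print(df_t)
print(df_idx.dtypes)
```

Keys that are missing from an inner dictionary, such as 'CONT' for Russia, show up as missing values in both results. One difference worth knowing: transposing a frame with mixed columns tends to leave every column with the generic object type, while from_dict() can infer a numeric type for POP.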

Using the Pandas read_csv() and .to_csv() Functions

A comma-separated values (CSV) file is a plaintext file with a .csv extension that holds tabular data. This is one of the most popular file formats for storing large amounts of data. Each row of the CSV file represents a single table row. The values in the same row are by default separated with commas, but you could change the separator to a semicolon, tab, space, or some other character.

Write a CSV File

You can save your Pandas DataFrame as a CSV file with .to_csv():

>>> df.to_csv('data.csv')

That's it! You've created the file data.csv in your current working directory. Here's how your CSV file should look:

,COUNTRY,POP,AREA,GDP,CONT,IND_DAY
CHN,China,1398.72,9596.96,12234.78,Asia,
IND,India,1351.16,3287.26,2575.67,Asia,1947-08-15
USA,US,329.74,9833.52,19485.39,N.America,1776-07-04
IDN,Indonesia,268.07,1910.93,1015.54,Asia,1945-08-17
BRA,Brazil,210.32,8515.77,2055.51,S.America,1822-09-07
PAK,Pakistan,205.71,881.91,302.14,Asia,1947-08-14
NGA,Nigeria,200.96,923.77,375.77,Africa,1960-10-01
BGD,Bangladesh,167.09,147.57,245.63,Asia,1971-03-26
RUS,Russia,146.79,17098.25,1530.75,,1992-06-12
MEX,Mexico,126.58,1964.38,1158.23,N.America,1810-09-16
JPN,Japan,126.22,377.97,4872.42,Asia,
DEU,Germany,83.02,357.11,3693.2,Europe,
FRA,France,67.02,640.68,2582.49,Europe,1789-07-14
GBR,UK,66.44,242.5,2631.23,Europe,
ITA,Italy,60.36,301.34,1943.84,Europe,
ARG,Argentina,44.94,2780.4,637.49,S.America,1816-07-09
DZA,Algeria,43.38,2381.74,167.56,Africa,1962-07-05
CAN,Canada,37.59,9984.67,1647.12,N.America,1867-07-01
AUS,Australia,25.47,7692.02,1408.68,Oceania,
KAZ,Kazakhstan,18.53,2724.9,159.41,Asia,1991-12-16

This text file contains the data separated with commas. The first column contains the row labels. In some cases, you'll find them irrelevant. If you don't want to keep them, then you can pass the argument index=False to .to_csv().
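The effect of index=False is easy to check on a tiny frame. The sketch below builds a two-row DataFrame (an illustrative stand-in for df, not the tutorial's full dataset) and compares the text produced with and without row labels. It omits the file path so that .to_csv() returns a string instead of writing a file.

```python
import pandas as pd

# A two-row stand-in for the tutorial's DataFrame.
small = pd.DataFrame(
    {'COUNTRY': ['China', 'India'], 'POP': [1398.72, 1351.16]},
    index=['CHN', 'IND'],
)

with_labels = small.to_csv()                # row labels in the first column
without_labels = small.to_csv(index=False)  # row labels dropped

print(with_labels)
print(without_labels)
```

Note that dropping the labels is lossy: the country codes are not stored anywhere else in this frame, so they can't be recovered from the second output.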

Read a CSV File

Once your data is saved in a CSV file, you'll likely want to load and use it from time to time. You can do that with the Pandas read_csv() function:

>>> df = pd.read_csv('data.csv', index_col=0)
>>> df
        COUNTRY      POP      AREA       GDP       CONT     IND_DAY
CHN       China  1398.72   9596.96  12234.78       Asia         NaN
IND       India  1351.16   3287.26   2575.67       Asia  1947-08-15
USA          US   329.74   9833.52  19485.39  N.America  1776-07-04
IDN   Indonesia   268.07   1910.93   1015.54       Asia  1945-08-17
BRA      Brazil   210.32   8515.77   2055.51  S.America  1822-09-07
PAK    Pakistan   205.71    881.91    302.14       Asia  1947-08-14
NGA     Nigeria   200.96    923.77    375.77     Africa  1960-10-01
BGD  Bangladesh   167.09    147.57    245.63       Asia  1971-03-26
RUS      Russia   146.79  17098.25   1530.75        NaN  1992-06-12
MEX      Mexico   126.58   1964.38   1158.23  N.America  1810-09-16
JPN       Japan   126.22    377.97   4872.42       Asia         NaN
DEU     Germany    83.02    357.11   3693.20     Europe         NaN
FRA      France    67.02    640.68   2582.49     Europe  1789-07-14
GBR          UK    66.44    242.50   2631.23     Europe         NaN
ITA       Italy    60.36    301.34   1943.84     Europe         NaN
ARG   Argentina    44.94   2780.40    637.49  S.America  1816-07-09
DZA     Algeria    43.38   2381.74    167.56     Africa  1962-07-05
CAN      Canada    37.59   9984.67   1647.12  N.America  1867-07-01
AUS   Australia    25.47   7692.02   1408.68    Oceania         NaN
KAZ  Kazakhstan    18.53   2724.90    159.41       Asia  1991-12-16

In this example, the Pandas read_csv() function returns a new DataFrame with the data and labels from the file data.csv, which you specified with the first argument. This string can be any valid path, including URLs.

The parameter index_col specifies the column from the CSV file that contains the row labels. You assign a zero-based column index to this parameter. You should set index_col when the CSV file contains the row labels to avoid loading them as data.
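A short sketch makes the role of index_col concrete. It reads the same CSV text twice, once with index_col=0 and once without. The text is held in an in-memory io.StringIO buffer rather than a file purely to keep the example self-contained; csv_text, labeled, and unlabeled are illustrative names.

```python
import io

import pandas as pd

# CSV text with row labels in the first, unnamed column.
csv_text = ",COUNTRY,POP\nCHN,China,1398.72\nIND,India,1351.16\n"

# With index_col=0, the first column becomes the row labels.
labeled = pd.read_csv(io.StringIO(csv_text), index_col=0)

# Without it, the labels are loaded as an ordinary data column.
unlabeled = pd.read_csv(io.StringIO(csv_text))

print(labeled.index.tolist())
print(unlabeled.columns.tolist())
```

In the second case, pandas gives the label column a placeholder name and falls back to a default integer index, which is usually not what you want for data that was saved with its row labels.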

You'll learn more about using Pandas with CSV files later on in this tutorial. You can also check out Reading and Writing CSV Files in Python to see how to handle CSV files with the built-in Python library csv as well.

Using Pandas to Write and Read Excel Files

Microsoft Excel is probably the most widely-used spreadsheet software. While older versions used binary .xls files, Excel 2007 introduced the new XML-based .xlsx file. You can read and write Excel files in Pandas, similar to CSV files. However, you'll need to install the following Python packages first:

- xlwt to write to .xls files

- openpyxl or XlsxWriter to write to .xlsx files

- xlrd to read Excel files

You can install them using pip with a single command:

$ pip install xlwt openpyxl xlsxwriter xlrd

You can also use Conda:

$ conda install xlwt openpyxl xlsxwriter xlrd

Please note that you don't have to install all these packages. For example, you don't need both openpyxl and XlsxWriter. If you're going to work just with .xls files, then you don't need either of them! However, if you intend to work only with .xlsx files, then you're going to need at least one of them, but not xlwt. Take some time to decide which packages are right for your project.

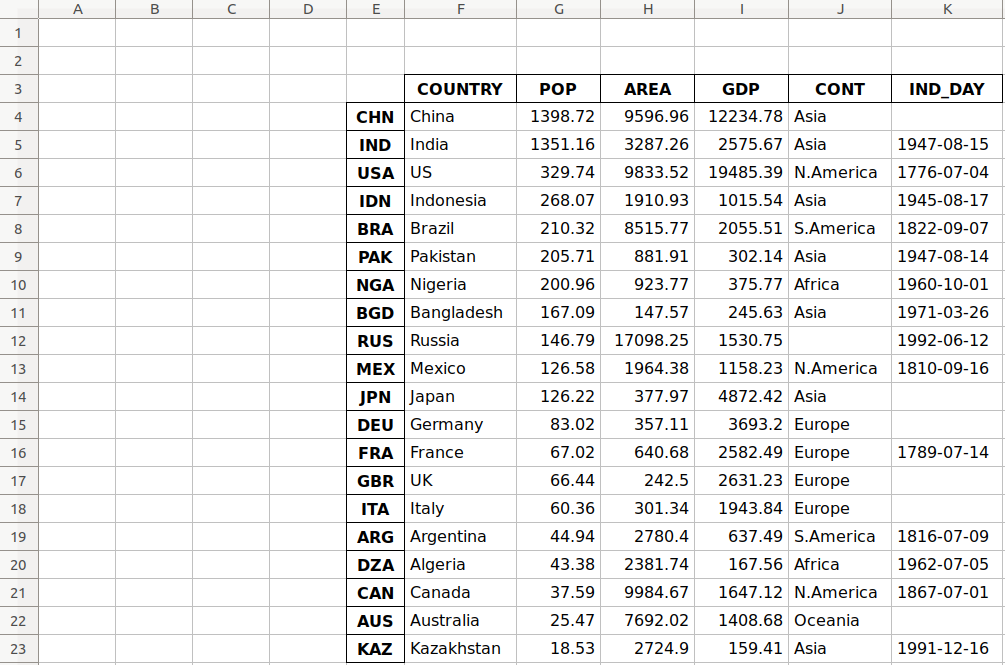

Write an Excel File

Once you have those packages installed, you can save your DataFrame in an Excel file with .to_excel():

>>> df.to_excel('data.xlsx')

The argument 'data.xlsx' represents the target file and, optionally, its path. The above statement should create the file data.xlsx in your current working directory. That file should look like this:

The first column of the file contains the labels of the rows, while the other columns store data.

Read an Excel File

You can load data from Excel files with read_excel():

>>> df = pd.read_excel('data.xlsx', index_col=0)
>>> df
        COUNTRY      POP      AREA       GDP       CONT     IND_DAY
CHN       China  1398.72   9596.96  12234.78       Asia         NaN
IND       India  1351.16   3287.26   2575.67       Asia  1947-08-15
USA          US   329.74   9833.52  19485.39  N.America  1776-07-04
IDN   Indonesia   268.07   1910.93   1015.54       Asia  1945-08-17
BRA      Brazil   210.32   8515.77   2055.51  S.America  1822-09-07
PAK    Pakistan   205.71    881.91    302.14       Asia  1947-08-14
NGA     Nigeria   200.96    923.77    375.77     Africa  1960-10-01
BGD  Bangladesh   167.09    147.57    245.63       Asia  1971-03-26
RUS      Russia   146.79  17098.25   1530.75        NaN  1992-06-12
MEX      Mexico   126.58   1964.38   1158.23  N.America  1810-09-16
JPN       Japan   126.22    377.97   4872.42       Asia         NaN
DEU     Germany    83.02    357.11   3693.20     Europe         NaN
FRA      France    67.02    640.68   2582.49     Europe  1789-07-14
GBR          UK    66.44    242.50   2631.23     Europe         NaN
ITA       Italy    60.36    301.34   1943.84     Europe         NaN
ARG   Argentina    44.94   2780.40    637.49  S.America  1816-07-09
DZA     Algeria    43.38   2381.74    167.56     Africa  1962-07-05
CAN      Canada    37.59   9984.67   1647.12  N.America  1867-07-01
AUS   Australia    25.47   7692.02   1408.68    Oceania         NaN
KAZ  Kazakhstan    18.53   2724.90    159.41       Asia  1991-12-16

read_excel() returns a new DataFrame that contains the values from data.xlsx. You can also use read_excel() with OpenDocument spreadsheets, or .ods files.

You'll learn more about working with Excel files later on in this tutorial. You can also check out Using Pandas to Read Large Excel Files in Python.

Understanding the Pandas IO API

Pandas IO Tools is the API that allows you to save the contents of Series and DataFrame objects to the clipboard, objects, or files of various types. It also enables loading data from the clipboard, objects, or files.

Write Files

Series and DataFrame objects have methods that enable writing data and labels to the clipboard or files. They're named with the pattern .to_<file-type>() , where <file-type> is the type of the target file.

You've learned about .to_csv() and .to_excel(), but there are others, including:

- .to_json()

- .to_html()

- .to_sql()

- .to_pickle()

There are still more file types that you can write to, so this list is not exhaustive.

These methods have parameters specifying the target file path where you want to save the data and labels. This is mandatory in some cases and optional in others. If this option is available and you choose to omit it, then the methods return the objects (like strings or iterables) with the contents of DataFrame instances.
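As a quick illustration of the optional path, the sketch below calls three writer methods without a target and collects what they return. The frame and the variable names are made up for this example. Methods such as .to_pickle() are left out because they need a path.

```python
import json

import pandas as pd

# A tiny one-column frame, used only for this illustration.
small = pd.DataFrame({'POP': [1398.72, 1351.16]}, index=['CHN', 'IND'])

# With no target given, each of these methods returns a string.
as_csv = small.to_csv()
as_json = small.to_json()
as_html = small.to_html()

print(as_csv)
print(json.loads(as_json))  # parse the JSON text back into a dict
print(as_html[:40])
```

Returning a string is handy when you want to send the data somewhere other than the local disk, for example in a network response or a log message.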

The optional parameter compression decides how to compress the file with the data and labels. You'll learn more about it later on. There are a few other parameters, but they're mostly specific to one or several methods. You won't go into them in detail here.

Read Files

Pandas functions for reading the contents of files are named using the pattern .read_<file-type>(), where <file-type> indicates the type of the file to read. You've already seen the Pandas read_csv() and read_excel() functions. Here are a few others:

- read_json()

- read_html()

- read_sql()

- read_pickle()

These functions have a parameter that specifies the target file path. It can be any valid string that represents the path, either on a local machine or in a URL. Other objects are also acceptable depending on the file type.

The optional parameter compression determines the type of decompression to use for the compressed files. You'll learn about it later on in this tutorial. There are other parameters, but they're specific to one or several functions. You won't go into them in detail here.

Working With Different File Types

The Pandas library offers a wide range of possibilities for saving your data to files and loading data from files. In this section, you'll learn more about working with CSV and Excel files. You'll also see how to use other types of files, like JSON, web pages, databases, and Python pickle files.

CSV Files

You've already learned how to read and write CSV files. Now let's dig a little deeper into the details. When you use .to_csv() to save your DataFrame, you can provide an argument for the parameter path_or_buf to specify the path, name, and extension of the target file.

path_or_buf is the first argument .to_csv() will get. It can be any string that represents a valid file path that includes the file name and its extension. You've seen this in a previous example. However, if you omit path_or_buf, then .to_csv() won't create any files. Instead, it'll return the corresponding string:

>>> df = pd.DataFrame(data=data).T
>>> s = df.to_csv()
>>> print(s)
,COUNTRY,POP,AREA,GDP,CONT,IND_DAY
CHN,China,1398.72,9596.96,12234.78,Asia,
IND,India,1351.16,3287.26,2575.67,Asia,1947-08-15
USA,US,329.74,9833.52,19485.39,N.America,1776-07-04
IDN,Indonesia,268.07,1910.93,1015.54,Asia,1945-08-17
BRA,Brazil,210.32,8515.77,2055.51,S.America,1822-09-07
PAK,Pakistan,205.71,881.91,302.14,Asia,1947-08-14
NGA,Nigeria,200.96,923.77,375.77,Africa,1960-10-01
BGD,Bangladesh,167.09,147.57,245.63,Asia,1971-03-26
RUS,Russia,146.79,17098.25,1530.75,,1992-06-12
MEX,Mexico,126.58,1964.38,1158.23,N.America,1810-09-16
JPN,Japan,126.22,377.97,4872.42,Asia,
DEU,Germany,83.02,357.11,3693.2,Europe,
FRA,France,67.02,640.68,2582.49,Europe,1789-07-14
GBR,UK,66.44,242.5,2631.23,Europe,
ITA,Italy,60.36,301.34,1943.84,Europe,
ARG,Argentina,44.94,2780.4,637.49,S.America,1816-07-09
DZA,Algeria,43.38,2381.74,167.56,Africa,1962-07-05
CAN,Canada,37.59,9984.67,1647.12,N.America,1867-07-01
AUS,Australia,25.47,7692.02,1408.68,Oceania,
KAZ,Kazakhstan,18.53,2724.9,159.41,Asia,1991-12-16

Now you have the string s instead of a CSV file. You also have some missing values in your DataFrame object. For example, the continent for Russia and the independence days for several countries (China, Japan, and so on) are not available. In data science and machine learning, you must handle missing values carefully. Pandas excels here! By default, Pandas uses the NaN value to replace the missing values.

The continent that corresponds to Russia in df is nan:

>>> df.loc['RUS', 'CONT']
nan

This example uses .loc[] to get data with the specified row and column names.

When you save your DataFrame to a CSV file, empty strings ('') will represent the missing data. You can see this both in your file data.csv and in the string s. If you want to change this behavior, then use the optional parameter na_rep:

>>> df.to_csv('new-data.csv', na_rep='(missing)')

This code produces the file new-data.csv where the missing values are no longer empty strings. Here's how this file should look:

,COUNTRY,POP,AREA,GDP,CONT,IND_DAY
CHN,China,1398.72,9596.96,12234.78,Asia,(missing)
IND,India,1351.16,3287.26,2575.67,Asia,1947-08-15
USA,US,329.74,9833.52,19485.39,N.America,1776-07-04
IDN,Indonesia,268.07,1910.93,1015.54,Asia,1945-08-17
BRA,Brazil,210.32,8515.77,2055.51,S.America,1822-09-07
PAK,Pakistan,205.71,881.91,302.14,Asia,1947-08-14
NGA,Nigeria,200.96,923.77,375.77,Africa,1960-10-01
BGD,Bangladesh,167.09,147.57,245.63,Asia,1971-03-26
RUS,Russia,146.79,17098.25,1530.75,(missing),1992-06-12
MEX,Mexico,126.58,1964.38,1158.23,N.America,1810-09-16
JPN,Japan,126.22,377.97,4872.42,Asia,(missing)
DEU,Germany,83.02,357.11,3693.2,Europe,(missing)
FRA,France,67.02,640.68,2582.49,Europe,1789-07-14
GBR,UK,66.44,242.5,2631.23,Europe,(missing)
ITA,Italy,60.36,301.34,1943.84,Europe,(missing)
ARG,Argentina,44.94,2780.4,637.49,S.America,1816-07-09
DZA,Algeria,43.38,2381.74,167.56,Africa,1962-07-05
CAN,Canada,37.59,9984.67,1647.12,N.America,1867-07-01
AUS,Australia,25.47,7692.02,1408.68,Oceania,(missing)
KAZ,Kazakhstan,18.53,2724.9,159.41,Asia,1991-12-16

Now, the string '(missing)' in the file corresponds to the nan values from df.
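The same behavior can be reproduced on a two-row frame. This is only a sketch: the frame small and its contents are assumptions made for the illustration, and the output is returned as a string instead of being written to new-data.csv.

```python
import pandas as pd

# One known continent and one missing value.
small = pd.DataFrame({'CONT': ['Asia', float('nan')]}, index=['JPN', 'RUS'])

default_text = small.to_csv()                  # missing value -> empty field
marked_text = small.to_csv(na_rep='(missing)') # missing value -> custom label

print(default_text)
print(marked_text)
```

A visible label like '(missing)' can make gaps easier to spot when people read the file, at the cost of needing na_values when you load it again.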

When Pandas reads files, it considers the empty string ('') and a few others as missing values by default:

- 'nan'

- '-nan'

- 'NA'

- 'N/A'

- 'NaN'

- 'null'

If you don't want this behavior, then you can pass keep_default_na=False to the Pandas read_csv() function. To specify other labels for missing values, use the parameter na_values:

>>> pd.read_csv('new-data.csv', index_col=0, na_values='(missing)')
        COUNTRY      POP      AREA       GDP       CONT     IND_DAY
CHN       China  1398.72   9596.96  12234.78       Asia         NaN
IND       India  1351.16   3287.26   2575.67       Asia  1947-08-15
USA          US   329.74   9833.52  19485.39  N.America  1776-07-04
IDN   Indonesia   268.07   1910.93   1015.54       Asia  1945-08-17
BRA      Brazil   210.32   8515.77   2055.51  S.America  1822-09-07
PAK    Pakistan   205.71    881.91    302.14       Asia  1947-08-14
NGA     Nigeria   200.96    923.77    375.77     Africa  1960-10-01
BGD  Bangladesh   167.09    147.57    245.63       Asia  1971-03-26
RUS      Russia   146.79  17098.25   1530.75        NaN  1992-06-12
MEX      Mexico   126.58   1964.38   1158.23  N.America  1810-09-16
JPN       Japan   126.22    377.97   4872.42       Asia         NaN
DEU     Germany    83.02    357.11   3693.20     Europe         NaN
FRA      France    67.02    640.68   2582.49     Europe  1789-07-14
GBR          UK    66.44    242.50   2631.23     Europe         NaN
ITA       Italy    60.36    301.34   1943.84     Europe         NaN
ARG   Argentina    44.94   2780.40    637.49  S.America  1816-07-09
DZA     Algeria    43.38   2381.74    167.56     Africa  1962-07-05
CAN      Canada    37.59   9984.67   1647.12  N.America  1867-07-01
AUS   Australia    25.47   7692.02   1408.68    Oceania         NaN
KAZ  Kazakhstan    18.53   2724.90    159.41       Asia  1991-12-16

Here, you've marked the string '(missing)' as a new missing data label, and Pandas replaced it with nan when it read the file.
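The interaction between the default labels, na_values, and keep_default_na is easier to follow side by side. The sketch below reads the same small CSV text three ways. The text, the NOTE column, and the variable names are invented for this illustration, and io.StringIO stands in for a file on disk.

```python
import io

import pandas as pd

# '(missing)' is a custom label, 'NA' is a default one, and JPN's NOTE is empty.
csv_text = "CODE,CONT,NOTE\nRUS,(missing),NA\nJPN,Asia,\n"

# Default behavior: 'NA' and the empty field are missing, '(missing)' is text.
default = pd.read_csv(io.StringIO(csv_text), index_col=0)

# na_values adds '(missing)' to the labels that count as missing.
custom = pd.read_csv(io.StringIO(csv_text), index_col=0, na_values='(missing)')

# keep_default_na=False turns the default labels off, so everything stays text.
raw = pd.read_csv(io.StringIO(csv_text), index_col=0, keep_default_na=False)

print(default)
print(custom)
print(raw)
```

Turning the defaults off can matter for real data: a column of country codes, for instance, may legitimately contain the string 'NA'.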

When you load data from a file, Pandas assigns the data types to the values of each column by default. You can check these types with .dtypes:

>>> df = pd.read_csv('data.csv', index_col=0)
>>> df.dtypes
COUNTRY     object
POP        float64
AREA       float64
GDP        float64
CONT        object
IND_DAY     object
dtype: object

The columns with strings and dates ('COUNTRY', 'CONT', and 'IND_DAY') have the data type object. Meanwhile, the numeric columns contain 64-bit floating-point numbers (float64).

You can use the parameter dtype to specify the desired data types and parse_dates to force use of datetimes:

>>> dtypes = {'POP': 'float32', 'AREA': 'float32', 'GDP': 'float32'}
>>> df = pd.read_csv('data.csv', index_col=0, dtype=dtypes,
...                  parse_dates=['IND_DAY'])
>>> df.dtypes
COUNTRY            object
POP               float32
AREA              float32
GDP               float32
CONT               object
IND_DAY    datetime64[ns]
dtype: object
>>> df['IND_DAY']
CHN          NaT
IND   1947-08-15
USA   1776-07-04
IDN   1945-08-17
BRA   1822-09-07
PAK   1947-08-14
NGA   1960-10-01
BGD   1971-03-26
RUS   1992-06-12
MEX   1810-09-16
JPN          NaT
DEU          NaT
FRA   1789-07-14
GBR          NaT
ITA          NaT
ARG   1816-07-09
DZA   1962-07-05
CAN   1867-07-01
AUS          NaT
KAZ   1991-12-16
Name: IND_DAY, dtype: datetime64[ns]

Now, you have 32-bit floating-point numbers (float32) as specified with dtype. These differ slightly from the original 64-bit numbers because of smaller precision. The values in the last column are considered as dates and have the data type datetime64. That's why the NaN values in this column are replaced with NaT.
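Here is a compact, self-contained version of the same idea that you can run without data.csv. The two-row CSV text and the variable names are assumptions for this sketch. The last line prints the float32 value converted back to a Python float so that the precision difference mentioned above becomes visible.

```python
import io

import pandas as pd

# Two rows: China has no independence day in the dataset, India does.
csv_text = ",POP,IND_DAY\nCHN,1398.72,\nIND,1351.16,1947-08-15\n"

small = pd.read_csv(
    io.StringIO(csv_text),
    index_col=0,
    dtype={'POP': 'float32'},   # store POP as 32-bit floats
    parse_dates=['IND_DAY'],    # parse IND_DAY as datetimes
)

print(small.dtypes)
print(small['IND_DAY'])
# Compare with the 1398.72 that was in the file.
print(repr(float(small.loc['CHN', 'POP'])))
```

The exact datetime resolution reported by .dtypes can vary between Pandas versions, so the sketch doesn't rely on it.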

Now that you have real dates, you can save them in the format you like:

>>> df = pd.read_csv('data.csv', index_col=0, parse_dates=['IND_DAY'])
>>> df.to_csv('formatted-data.csv', date_format='%B %d, %Y')

Here, you've specified the parameter date_format to be '%B %d, %Y'. Here's the resulting file:

,COUNTRY,POP,AREA,GDP,CONT,IND_DAY
CHN,China,1398.72,9596.96,12234.78,Asia,
IND,India,1351.16,3287.26,2575.67,Asia,"August 15, 1947"
USA,US,329.74,9833.52,19485.39,N.America,"July 04, 1776"
IDN,Indonesia,268.07,1910.93,1015.54,Asia,"August 17, 1945"
BRA,Brazil,210.32,8515.77,2055.51,S.America,"September 07, 1822"
PAK,Pakistan,205.71,881.91,302.14,Asia,"August 14, 1947"
NGA,Nigeria,200.96,923.77,375.77,Africa,"October 01, 1960"
BGD,Bangladesh,167.09,147.57,245.63,Asia,"March 26, 1971"
RUS,Russia,146.79,17098.25,1530.75,,"June 12, 1992"
MEX,Mexico,126.58,1964.38,1158.23,N.America,"September 16, 1810"
JPN,Japan,126.22,377.97,4872.42,Asia,
DEU,Germany,83.02,357.11,3693.2,Europe,
FRA,France,67.02,640.68,2582.49,Europe,"July 14, 1789"
GBR,UK,66.44,242.5,2631.23,Europe,
ITA,Italy,60.36,301.34,1943.84,Europe,
ARG,Argentina,44.94,2780.4,637.49,S.America,"July 09, 1816"
DZA,Algeria,43.38,2381.74,167.56,Africa,"July 05, 1962"
CAN,Canada,37.59,9984.67,1647.12,N.America,"July 01, 1867"
AUS,Australia,25.47,7692.02,1408.68,Oceania,
KAZ,Kazakhstan,18.53,2724.9,159.41,Asia,"December 16, 1991"

The format of the dates is different now. The format '%B %d, %Y' means the date will first display the full name of the month, then the day followed by a comma, and finally the full year. Because each formatted date contains a comma, .to_csv() wraps it in double quotes so that it still counts as a single field.
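The following sketch applies the same date_format to a two-row frame and returns the result as a string. The frame is an assumption made for the example. Month names produced by %B depend on the active locale, so the output shown in the comments of your own runs may differ on a non-English system.

```python
import pandas as pd

# One real date and one missing date (NaT).
small = pd.DataFrame(
    {'IND_DAY': pd.to_datetime(['1947-08-15', None])},
    index=['IND', 'CHN'],
)

# Format dates as "<month name> <day>, <year>" when writing.
text = small.to_csv(date_format='%B %d, %Y')
print(text)
```

Missing dates are still written as empty fields, since date_format only applies to actual datetime values.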

There are several other optional parameters that you can use with .to_csv():

- sep denotes a values separator.

- decimal indicates a decimal separator.

- encoding sets the file encoding.

- header specifies whether you want to write column labels in the file.

Here's how you would pass arguments for sep and header:

>>> s = df.to_csv(sep=';', header=False)
>>> print(s)
CHN;China;1398.72;9596.96;12234.78;Asia;
IND;India;1351.16;3287.26;2575.67;Asia;1947-08-15
USA;US;329.74;9833.52;19485.39;N.America;1776-07-04
IDN;Indonesia;268.07;1910.93;1015.54;Asia;1945-08-17
BRA;Brazil;210.32;8515.77;2055.51;S.America;1822-09-07
PAK;Pakistan;205.71;881.91;302.14;Asia;1947-08-14
NGA;Nigeria;200.96;923.77;375.77;Africa;1960-10-01
BGD;Bangladesh;167.09;147.57;245.63;Asia;1971-03-26
RUS;Russia;146.79;17098.25;1530.75;;1992-06-12
MEX;Mexico;126.58;1964.38;1158.23;N.America;1810-09-16
JPN;Japan;126.22;377.97;4872.42;Asia;
DEU;Germany;83.02;357.11;3693.2;Europe;
FRA;France;67.02;640.68;2582.49;Europe;1789-07-14
GBR;UK;66.44;242.5;2631.23;Europe;
ITA;Italy;60.36;301.34;1943.84;Europe;
ARG;Argentina;44.94;2780.4;637.49;S.America;1816-07-09
DZA;Algeria;43.38;2381.74;167.56;Africa;1962-07-05
CAN;Canada;37.59;9984.67;1647.12;N.America;1867-07-01
AUS;Australia;25.47;7692.02;1408.68;Oceania;
KAZ;Kazakhstan;18.53;2724.9;159.41;Asia;1991-12-16

The data is separated with a semicolon (';') because you've specified sep=';'. Also, since you passed header=False, you see your data without the header row of column names.
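The decimal parameter from the list above pairs naturally with sep=';', a combination commonly seen in CSV files from locales that use a decimal comma. The sketch below writes such text and reads it back. The frame and variable names are assumptions for this illustration.

```python
import io

import pandas as pd

small = pd.DataFrame({'POP': [1398.72, 1351.16]}, index=['CHN', 'IND'])

# Semicolon-separated values, decimal commas, and no header row.
text = small.to_csv(sep=';', decimal=',', header=False)
print(text)

# Reading it back requires the same settings on the read_csv() side.
back = pd.read_csv(io.StringIO(text), sep=';', decimal=',',
                   header=None, index_col=0)
print(back)
```

Since the file has no header row, header=None tells read_csv() not to treat the first data row as column names, and the remaining column gets an integer label.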

The Pandas read_csv() function has many additional options for managing missing data, working with dates and times, quoting, encoding, handling errors, and more. For example, if you have a file with one data column and want to get a Series object instead of a DataFrame, then you can pass squeeze=True to read_csv(). You'll learn later on about data compression and decompression, as well as how to skip rows and columns.
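One caveat for readers on newer releases: the squeeze parameter of read_csv() was deprecated in Pandas 1.4 and removed in Pandas 2.0, so squeeze=True only works with older versions such as the 0.25.1 used in this tutorial. A sketch that should work on both old and new versions is to read the file normally and then call .squeeze("columns") on the result. The CSV text and names below are made up for the example.

```python
import io

import pandas as pd

# A file with row labels and a single data column.
csv_text = ",POP\nCHN,1398.72\nIND,1351.16\n"

# Read a one-column DataFrame, then collapse the column axis into a Series.
frame = pd.read_csv(io.StringIO(csv_text), index_col=0)
series = frame.squeeze("columns")

print(type(series).__name__)
print(series)
```

The resulting Series keeps the row labels as its index and takes the column label as its name.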

JSON Files

JSON stands for JavaScript object notation. JSON files are plaintext files used for data interchange, and humans can read them easily. They follow the ISO/IEC 21778:2017 and ECMA-404 standards and use the .json extension. Python and Pandas work well with JSON files, as Python's json library offers built-in support for them.

You can save the data from your DataFrame to a JSON file with .to_json(). Start by creating a DataFrame object again. Use the dictionary data that holds the data about countries and then apply .to_json():

>>> df = pd.DataFrame(data=data).T
>>> df.to_json('data-columns.json')

This code produces the file data-columns.json. Here's how this file should look:

{"COUNTRY":{"CHN":"China","IND":"India","USA":"US","IDN":"Indonesia","BRA":"Brazil","PAK":"Pakistan","NGA":"Nigeria","BGD":"Bangladesh","RUS":"Russia","MEX":"Mexico","JPN":"Japan","DEU":"Germany","FRA":"France","GBR":"UK","ITA":"Italy","ARG":"Argentina","DZA":"Algeria","CAN":"Canada","AUS":"Australia","KAZ":"Kazakhstan"},
"POP":{"CHN":1398.72,"IND":1351.16,"USA":329.74,"IDN":268.07,"BRA":210.32,"PAK":205.71,"NGA":200.96,"BGD":167.09,"RUS":146.79,"MEX":126.58,"JPN":126.22,"DEU":83.02,"FRA":67.02,"GBR":66.44,"ITA":60.36,"ARG":44.94,"DZA":43.38,"CAN":37.59,"AUS":25.47,"KAZ":18.53},
"AREA":{"CHN":9596.96,"IND":3287.26,"USA":9833.52,"IDN":1910.93,"BRA":8515.77,"PAK":881.91,"NGA":923.77,"BGD":147.57,"RUS":17098.25,"MEX":1964.38,"JPN":377.97,"DEU":357.11,"FRA":640.68,"GBR":242.5,"ITA":301.34,"ARG":2780.4,"DZA":2381.74,"CAN":9984.67,"AUS":7692.02,"KAZ":2724.9},
"GDP":{"CHN":12234.78,"IND":2575.67,"USA":19485.39,"IDN":1015.54,"BRA":2055.51,"PAK":302.14,"NGA":375.77,"BGD":245.63,"RUS":1530.75,"MEX":1158.23,"JPN":4872.42,"DEU":3693.2,"FRA":2582.49,"GBR":2631.23,"ITA":1943.84,"ARG":637.49,"DZA":167.56,"CAN":1647.12,"AUS":1408.68,"KAZ":159.41},
"CONT":{"CHN":"Asia","IND":"Asia","USA":"N.America","IDN":"Asia","BRA":"S.America","PAK":"Asia","NGA":"Africa","BGD":"Asia","RUS":null,"MEX":"N.America","JPN":"Asia","DEU":"Europe","FRA":"Europe","GBR":"Europe","ITA":"Europe","ARG":"S.America","DZA":"Africa","CAN":"N.America","AUS":"Oceania","KAZ":"Asia"},
"IND_DAY":{"CHN":null,"IND":"1947-08-15","USA":"1776-07-04","IDN":"1945-08-17","BRA":"1822-09-07","PAK":"1947-08-14","NGA":"1960-10-01","BGD":"1971-03-26","RUS":"1992-06-12","MEX":"1810-09-16","JPN":null,"DEU":null,"FRA":"1789-07-14","GBR":null,"ITA":null,"ARG":"1816-07-09","DZA":"1962-07-05","CAN":"1867-07-01","AUS":null,"KAZ":"1991-12-16"}}

data-columns.json has one large dictionary with the column labels as keys and the corresponding inner dictionaries as values.

You can get a different file structure if you pass an argument for the optional parameter orient:

>>> df.to_json('data-index.json', orient='index')

The orient parameter defaults to 'columns'. Here, you've set it to 'index'.

You should get a new file data-index.json. Here's how its content differs:

{"CHN":{"COUNTRY":"China","POP":1398.72,"AREA":9596.96,"GDP":12234.78,"CONT":"Asia","IND_DAY":null},
"IND":{"COUNTRY":"India","POP":1351.16,"AREA":3287.26,"GDP":2575.67,"CONT":"Asia","IND_DAY":"1947-08-15"},
"USA":{"COUNTRY":"US","POP":329.74,"AREA":9833.52,"GDP":19485.39,"CONT":"N.America","IND_DAY":"1776-07-04"},
"IDN":{"COUNTRY":"Indonesia","POP":268.07,"AREA":1910.93,"GDP":1015.54,"CONT":"Asia","IND_DAY":"1945-08-17"},
"BRA":{"COUNTRY":"Brazil","POP":210.32,"AREA":8515.77,"GDP":2055.51,"CONT":"S.America","IND_DAY":"1822-09-07"},
"PAK":{"COUNTRY":"Pakistan","POP":205.71,"AREA":881.91,"GDP":302.14,"CONT":"Asia","IND_DAY":"1947-08-14"},
"NGA":{"COUNTRY":"Nigeria","POP":200.96,"AREA":923.77,"GDP":375.77,"CONT":"Africa","IND_DAY":"1960-10-01"},
"BGD":{"COUNTRY":"Bangladesh","POP":167.09,"AREA":147.57,"GDP":245.63,"CONT":"Asia","IND_DAY":"1971-03-26"},
"RUS":{"COUNTRY":"Russia","POP":146.79,"AREA":17098.25,"GDP":1530.75,"CONT":null,"IND_DAY":"1992-06-12"},
"MEX":{"COUNTRY":"Mexico","POP":126.58,"AREA":1964.38,"GDP":1158.23,"CONT":"N.America","IND_DAY":"1810-09-16"},
"JPN":{"COUNTRY":"Japan","POP":126.22,"AREA":377.97,"GDP":4872.42,"CONT":"Asia","IND_DAY":null},
"DEU":{"COUNTRY":"Germany","POP":83.02,"AREA":357.11,"GDP":3693.2,"CONT":"Europe","IND_DAY":null},
"FRA":{"COUNTRY":"France","POP":67.02,"AREA":640.68,"GDP":2582.49,"CONT":"Europe","IND_DAY":"1789-07-14"},
"GBR":{"COUNTRY":"UK","POP":66.44,"AREA":242.5,"GDP":2631.23,"CONT":"Europe","IND_DAY":null},
"ITA":{"COUNTRY":"Italy","POP":60.36,"AREA":301.34,"GDP":1943.84,"CONT":"Europe","IND_DAY":null},
"ARG":{"COUNTRY":"Argentina","POP":44.94,"AREA":2780.4,"GDP":637.49,"CONT":"S.America","IND_DAY":"1816-07-09"},
"DZA":{"COUNTRY":"Algeria","POP":43.38,"AREA":2381.74,"GDP":167.56,"CONT":"Africa","IND_DAY":"1962-07-05"},
"CAN":{"COUNTRY":"Canada","POP":37.59,"AREA":9984.67,"GDP":1647.12,"CONT":"N.America","IND_DAY":"1867-07-01"},
"AUS":{"COUNTRY":"Australia","POP":25.47,"AREA":7692.02,"GDP":1408.68,"CONT":"Oceania","IND_DAY":null},
"KAZ":{"COUNTRY":"Kazakhstan","POP":18.53,"AREA":2724.9,"GDP":159.41,"CONT":"Asia","IND_DAY":"1991-12-16"}}

data-index.json also has one large dictionary, but this time the row labels are the keys, and the inner dictionaries are the values.

There are a few more options for orient. One of them is 'records':

>>> df.to_json('data-records.json', orient='records')

This code should yield the file data-records.json. Here's its content:

[{"COUNTRY":"China","POP":1398.72,"AREA":9596.96,"GDP":12234.78,"CONT":"Asia","IND_DAY":null},
{"COUNTRY":"India","POP":1351.16,"AREA":3287.26,"GDP":2575.67,"CONT":"Asia","IND_DAY":"1947-08-15"},
{"COUNTRY":"US","POP":329.74,"AREA":9833.52,"GDP":19485.39,"CONT":"N.America","IND_DAY":"1776-07-04"},
{"COUNTRY":"Indonesia","POP":268.07,"AREA":1910.93,"GDP":1015.54,"CONT":"Asia","IND_DAY":"1945-08-17"},
{"COUNTRY":"Brazil","POP":210.32,"AREA":8515.77,"GDP":2055.51,"CONT":"S.America","IND_DAY":"1822-09-07"},
{"COUNTRY":"Pakistan","POP":205.71,"AREA":881.91,"GDP":302.14,"CONT":"Asia","IND_DAY":"1947-08-14"},
{"COUNTRY":"Nigeria","POP":200.96,"AREA":923.77,"GDP":375.77,"CONT":"Africa","IND_DAY":"1960-10-01"},
{"COUNTRY":"Bangladesh","POP":167.09,"AREA":147.57,"GDP":245.63,"CONT":"Asia","IND_DAY":"1971-03-26"},
{"COUNTRY":"Russia","POP":146.79,"AREA":17098.25,"GDP":1530.75,"CONT":null,"IND_DAY":"1992-06-12"},
{"COUNTRY":"Mexico","POP":126.58,"AREA":1964.38,"GDP":1158.23,"CONT":"N.America","IND_DAY":"1810-09-16"},
{"COUNTRY":"Japan","POP":126.22,"AREA":377.97,"GDP":4872.42,"CONT":"Asia","IND_DAY":null},
{"COUNTRY":"Germany","POP":83.02,"AREA":357.11,"GDP":3693.2,"CONT":"Europe","IND_DAY":null},
{"COUNTRY":"France","POP":67.02,"AREA":640.68,"GDP":2582.49,"CONT":"Europe","IND_DAY":"1789-07-14"},
{"COUNTRY":"UK","POP":66.44,"AREA":242.5,"GDP":2631.23,"CONT":"Europe","IND_DAY":null},
{"COUNTRY":"Italy","POP":60.36,"AREA":301.34,"GDP":1943.84,"CONT":"Europe","IND_DAY":null},
{"COUNTRY":"Argentina","POP":44.94,"AREA":2780.4,"GDP":637.49,"CONT":"S.America","IND_DAY":"1816-07-09"},
{"COUNTRY":"Algeria","POP":43.38,"AREA":2381.74,"GDP":167.56,"CONT":"Africa","IND_DAY":"1962-07-05"},
{"COUNTRY":"Canada","POP":37.59,"AREA":9984.67,"GDP":1647.12,"CONT":"N.America","IND_DAY":"1867-07-01"},
{"COUNTRY":"Australia","POP":25.47,"AREA":7692.02,"GDP":1408.68,"CONT":"Oceania","IND_DAY":null},
{"COUNTRY":"Kazakhstan","POP":18.53,"AREA":2724.9,"GDP":159.41,"CONT":"Asia","IND_DAY":"1991-12-16"}]

data-records.json holds a list with one dictionary for each row. The row labels are not written.

You can get another interesting file structure with orient='split':

>>> df.to_json('data-split.json', orient='split')

The resulting file is data-split.json. Here's how this file should look:

{"columns":["COUNTRY","POP","AREA","GDP","CONT","IND_DAY"],
"index":["CHN","IND","USA","IDN","BRA","PAK","NGA","BGD","RUS","MEX","JPN","DEU","FRA","GBR","ITA","ARG","DZA","CAN","AUS","KAZ"],
"data":[["China",1398.72,9596.96,12234.78,"Asia",null],
["India",1351.16,3287.26,2575.67,"Asia","1947-08-15"],
["US",329.74,9833.52,19485.39,"N.America","1776-07-04"],
["Indonesia",268.07,1910.93,1015.54,"Asia","1945-08-17"],
["Brazil",210.32,8515.77,2055.51,"S.America","1822-09-07"],
["Pakistan",205.71,881.91,302.14,"Asia","1947-08-14"],
["Nigeria",200.96,923.77,375.77,"Africa","1960-10-01"],
["Bangladesh",167.09,147.57,245.63,"Asia","1971-03-26"],
["Russia",146.79,17098.25,1530.75,null,"1992-06-12"],
["Mexico",126.58,1964.38,1158.23,"N.America","1810-09-16"],
["Japan",126.22,377.97,4872.42,"Asia",null],
["Germany",83.02,357.11,3693.2,"Europe",null],
["France",67.02,640.68,2582.49,"Europe","1789-07-14"],
["UK",66.44,242.5,2631.23,"Europe",null],
["Italy",60.36,301.34,1943.84,"Europe",null],
["Argentina",44.94,2780.4,637.49,"S.America","1816-07-09"],
["Algeria",43.38,2381.74,167.56,"Africa","1962-07-05"],
["Canada",37.59,9984.67,1647.12,"N.America","1867-07-01"],
["Australia",25.47,7692.02,1408.68,"Oceania",null],
["Kazakhstan",18.53,2724.9,159.41,"Asia","1991-12-16"]]}

data-split.json contains one dictionary that holds the following lists:

- The names of the columns

- The labels of the rows

- The inner lists (2-dimensional sequence) that hold data values

If you don't provide the value for the optional parameter path_or_buf that defines the file path, then .to_json() will return a JSON string instead of writing the results to a file. This behavior is consistent with .to_csv().
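To compare the layouts side by side, the following sketch calls .to_json() without a path, so each call returns a string, and parses the results with the standard json module. The two-row DataFrame and the name layouts are illustrative stand-ins, not part of the tutorial's dataset.

```python
import json

import pandas as pd

# A made-up two-row frame standing in for the tutorial's country data.
df = pd.DataFrame(
    {"COUNTRY": ["China", "India"], "POP": [1398.72, 1351.16]},
    index=["CHN", "IND"],
)

# With no path given, to_json() returns a string; json.loads() turns it
# into plain Python objects so the layouts are easy to compare.
layouts = {
    orient: json.loads(df.to_json(orient=orient))
    for orient in ("columns", "index", "records", "split")
}

for orient, parsed in layouts.items():
    print(orient, "->", parsed)
```

Only 'records' drops the row labels, which is worth keeping in mind if you need to rebuild the same index later.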

There are other optional parameters you can use. For example, you can set index=False to forgo saving row labels. You can manipulate precision with double_precision, and dates with date_format and date_unit. These last two parameters are particularly important when you have time series among your data:

>>> df = pd.DataFrame(data=data).T
>>> df['IND_DAY'] = pd.to_datetime(df['IND_DAY'])
>>> df.dtypes
COUNTRY            object
POP                object
AREA               object
GDP                object
CONT               object
IND_DAY    datetime64[ns]
dtype: object
>>> df.to_json('data-time.json')

In this example, you've created the DataFrame from the dictionary data and used to_datetime() to convert the values in the last column to datetime64. Here's the resulting file:

{"COUNTRY":{"CHN":"China","IND":"India","USA":"US","IDN":"Indonesia","BRA":"Brazil","PAK":"Pakistan","NGA":"Nigeria","BGD":"Bangladesh","RUS":"Russia","MEX":"Mexico","JPN":"Japan","DEU":"Germany","FRA":"France","GBR":"UK","ITA":"Italy","ARG":"Argentina","DZA":"Algeria","CAN":"Canada","AUS":"Australia","KAZ":"Kazakhstan"},
"POP":{"CHN":1398.72,"IND":1351.16,"USA":329.74,"IDN":268.07,"BRA":210.32,"PAK":205.71,"NGA":200.96,"BGD":167.09,"RUS":146.79,"MEX":126.58,"JPN":126.22,"DEU":83.02,"FRA":67.02,"GBR":66.44,"ITA":60.36,"ARG":44.94,"DZA":43.38,"CAN":37.59,"AUS":25.47,"KAZ":18.53},
"AREA":{"CHN":9596.96,"IND":3287.26,"USA":9833.52,"IDN":1910.93,"BRA":8515.77,"PAK":881.91,"NGA":923.77,"BGD":147.57,"RUS":17098.25,"MEX":1964.38,"JPN":377.97,"DEU":357.11,"FRA":640.68,"GBR":242.5,"ITA":301.34,"ARG":2780.4,"DZA":2381.74,"CAN":9984.67,"AUS":7692.02,"KAZ":2724.9},
"GDP":{"CHN":12234.78,"IND":2575.67,"USA":19485.39,"IDN":1015.54,"BRA":2055.51,"PAK":302.14,"NGA":375.77,"BGD":245.63,"RUS":1530.75,"MEX":1158.23,"JPN":4872.42,"DEU":3693.2,"FRA":2582.49,"GBR":2631.23,"ITA":1943.84,"ARG":637.49,"DZA":167.56,"CAN":1647.12,"AUS":1408.68,"KAZ":159.41},
"CONT":{"CHN":"Asia","IND":"Asia","USA":"N.America","IDN":"Asia","BRA":"S.America","PAK":"Asia","NGA":"Africa","BGD":"Asia","RUS":null,"MEX":"N.America","JPN":"Asia","DEU":"Europe","FRA":"Europe","GBR":"Europe","ITA":"Europe","ARG":"S.America","DZA":"Africa","CAN":"N.America","AUS":"Oceania","KAZ":"Asia"},
"IND_DAY":{"CHN":null,"IND":-706320000000,"USA":-6106060800000,"IDN":-769219200000,"BRA":-4648924800000,"PAK":-706406400000,"NGA":-291945600000,"BGD":38793600000,"RUS":708307200000,"MEX":-5026838400000,"JPN":null,"DEU":null,"FRA":-5694969600000,"GBR":null,"ITA":null,"ARG":-4843411200000,"DZA":-236476800000,"CAN":-3234729600000,"AUS":null,"KAZ":692841600000}}

In this file, you have large integers instead of dates for the independence days. That's because the default value of the optional parameter date_format is 'epoch' whenever orient isn't 'table'. This default behavior expresses dates as an epoch in milliseconds relative to midnight on January 1, 1970.

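To make the epoch representation concrete, here is a minimal sketch that converts two of the millisecond values from data-time.json back into calendar dates using only the standard library. The helper name from_epoch_ms is hypothetical and exists only for this illustration.

```python
from datetime import datetime, timedelta, timezone

# Midnight on January 1, 1970 (UTC), the reference point for epoch values.
EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)


def from_epoch_ms(milliseconds: int) -> datetime:
    """Convert milliseconds relative to the epoch into a datetime.

    Adding a timedelta also works for negative values, that is, for
    dates before 1970.
    """
    return EPOCH + timedelta(milliseconds=milliseconds)


india = from_epoch_ms(-706320000000)     # value stored for "IND"
bangladesh = from_epoch_ms(38793600000)  # value stored for "BGD"
print(india.date(), bangladesh.date())
```

Negative numbers simply mean the date falls before 1970, which is why most of the independence days in the file are negative.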
However, if you pass date_format='iso', then you'll get the dates in the ISO 8601 format. In addition, date_unit decides the units of time:

>>> df = pd.DataFrame(data=data).T
>>> df['IND_DAY'] = pd.to_datetime(df['IND_DAY'])
>>> df.to_json('new-data-time.json', date_format='iso', date_unit='s')

This code produces the following JSON file:

{"COUNTRY":{"CHN":"China","IND":"India","USA":"US","IDN":"Indonesia","BRA":"Brazil","PAK":"Pakistan","NGA":"Nigeria","BGD":"Bangladesh","RUS":"Russia","MEX":"Mexico","JPN":"Japan","DEU":"Germany","FRA":"France","GBR":"UK","ITA":"Italy","ARG":"Argentina","DZA":"Algeria","CAN":"Canada","AUS":"Australia","KAZ":"Kazakhstan"},
"POP":{"CHN":1398.72,"IND":1351.16,"USA":329.74,"IDN":268.07,"BRA":210.32,"PAK":205.71,"NGA":200.96,"BGD":167.09,"RUS":146.79,"MEX":126.58,"JPN":126.22,"DEU":83.02,"FRA":67.02,"GBR":66.44,"ITA":60.36,"ARG":44.94,"DZA":43.38,"CAN":37.59,"AUS":25.47,"KAZ":18.53},
"AREA":{"CHN":9596.96,"IND":3287.26,"USA":9833.52,"IDN":1910.93,"BRA":8515.77,"PAK":881.91,"NGA":923.77,"BGD":147.57,"RUS":17098.25,"MEX":1964.38,"JPN":377.97,"DEU":357.11,"FRA":640.68,"GBR":242.5,"ITA":301.34,"ARG":2780.4,"DZA":2381.74,"CAN":9984.67,"AUS":7692.02,"KAZ":2724.9},
"GDP":{"CHN":12234.78,"IND":2575.67,"USA":19485.39,"IDN":1015.54,"BRA":2055.51,"PAK":302.14,"NGA":375.77,"BGD":245.63,"RUS":1530.75,"MEX":1158.23,"JPN":4872.42,"DEU":3693.2,"FRA":2582.49,"GBR":2631.23,"ITA":1943.84,"ARG":637.49,"DZA":167.56,"CAN":1647.12,"AUS":1408.68,"KAZ":159.41},
"CONT":{"CHN":"Asia","IND":"Asia","USA":"N.America","IDN":"Asia","BRA":"S.America","PAK":"Asia","NGA":"Africa","BGD":"Asia","RUS":null,"MEX":"N.America","JPN":"Asia","DEU":"Europe","FRA":"Europe","GBR":"Europe","ITA":"Europe","ARG":"S.America","DZA":"Africa","CAN":"N.America","AUS":"Oceania","KAZ":"Asia"},
"IND_DAY":{"CHN":null,"IND":"1947-08-15T00:00:00Z","USA":"1776-07-04T00:00:00Z","IDN":"1945-08-17T00:00:00Z","BRA":"1822-09-07T00:00:00Z","PAK":"1947-08-14T00:00:00Z","NGA":"1960-10-01T00:00:00Z","BGD":"1971-03-26T00:00:00Z","RUS":"1992-06-12T00:00:00Z","MEX":"1810-09-16T00:00:00Z","JPN":null,"DEU":null,"FRA":"1789-07-14T00:00:00Z","GBR":null,"ITA":null,"ARG":"1816-07-09T00:00:00Z","DZA":"1962-07-05T00:00:00Z","CAN":"1867-07-01T00:00:00Z","AUS":null,"KAZ":"1991-12-16T00:00:00Z"}}

The dates in the resulting file are in the ISO 8601 format.

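The effect of date_format and date_unit can be checked on a small scale. This sketch, which uses a made-up one-column DataFrame, serializes the same dates three ways and parses the returned strings with json.loads(). The exact ISO suffix (for example, a trailing Z) may differ between Pandas versions, so the code only prints the result instead of assuming a particular form.

```python
import json

import pandas as pd

# One date and one missing value, standing in for the IND_DAY column.
df = pd.DataFrame(
    {"IND_DAY": pd.to_datetime(["1947-08-15", None])},
    index=["IND", "CHN"],
)

# Epoch output: integers counted in the unit given by date_unit.
epoch_ms = json.loads(df.to_json(date_format="epoch", date_unit="ms"))
epoch_s = json.loads(df.to_json(date_format="epoch", date_unit="s"))

# ISO output: strings; date_unit controls the precision of the time part.
iso = json.loads(df.to_json(date_format="iso", date_unit="s"))

print(epoch_ms["IND_DAY"])
print(epoch_s["IND_DAY"])
print(iso["IND_DAY"])
```

Missing dates are written as null in every variant, so they come back as None after parsing.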
You can load the data from a JSON file with read_json():

>>> df = pd.read_json('data-index.json', orient='index',
...                   convert_dates=['IND_DAY'])

The parameter convert_dates has a similar purpose as parse_dates when you use it to read CSV files. The optional parameter orient is very important because it specifies how Pandas understands the structure of the file.
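Here is a minimal round-trip sketch of the same idea that doesn't touch the file system: it serializes a made-up two-row DataFrame with orient='index' and reads the text back through io.StringIO. The names original, json_text, and restored are illustrative only.

```python
import io

import pandas as pd

# A made-up frame with one missing date, similar to the country data.
original = pd.DataFrame(
    {"COUNTRY": ["China", "India"], "IND_DAY": [None, "1947-08-15"]},
    index=["CHN", "IND"],
)
json_text = original.to_json(orient="index")

# The orient used for reading has to match the one used for writing.
restored = pd.read_json(
    io.StringIO(json_text), orient="index", convert_dates=["IND_DAY"]
)
print(restored.dtypes)
print(restored)
```

Wrapping the text in io.StringIO lets read_json() treat it like an open file, which also works for JSON you've fetched from somewhere other than disk.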

There are other optional parameters you can use as well:

- Set the encoding with encoding.

- Manipulate dates with convert_dates and keep_default_dates.

- Impact precision with dtype and precise_float.

- Decode numeric data directly to NumPy arrays with numpy=True.

Note that you might lose the order of rows and columns when using the JSON format to store your data.

HTML Files

An HTML file is a plaintext file that uses hypertext markup language to help browsers render web pages. The extensions for HTML files are .html and .htm. You'll need to install an HTML parser library like lxml or html5lib to be able to work with HTML files:

$ pip install lxml html5lib

You can also use Conda to install the same packages:

$ conda install lxml html5lib

Once you have these libraries, you can save the contents of your DataFrame as an HTML file with .to_html():

>>> df = pd.DataFrame(data=data).T
>>> df.to_html('data.html')

This code generates a file data.html. Here's how this file should look:

<table border="1" class="dataframe">
  <thead>
    <tr style="text-align: right;"><th></th><th>COUNTRY</th><th>POP</th><th>AREA</th><th>GDP</th><th>CONT</th><th>IND_DAY</th></tr>
  </thead>
  <tbody>
    <tr><th>CHN</th><td>China</td><td>1398.72</td><td>9596.96</td><td>12234.8</td><td>Asia</td><td>NaN</td></tr>
    <tr><th>IND</th><td>India</td><td>1351.16</td><td>3287.26</td><td>2575.67</td><td>Asia</td><td>1947-08-15</td></tr>
    <tr><th>USA</th><td>US</td><td>329.74</td><td>9833.52</td><td>19485.4</td><td>N.America</td><td>1776-07-04</td></tr>
    <tr><th>IDN</th><td>Indonesia</td><td>268.07</td><td>1910.93</td><td>1015.54</td><td>Asia</td><td>1945-08-17</td></tr>
    <tr><th>BRA</th><td>Brazil</td><td>210.32</td><td>8515.77</td><td>2055.51</td><td>S.America</td><td>1822-09-07</td></tr>
    <tr><th>PAK</th><td>Pakistan</td><td>205.71</td><td>881.91</td><td>302.14</td><td>Asia</td><td>1947-08-14</td></tr>
    <tr><th>NGA</th><td>Nigeria</td><td>200.96</td><td>923.77</td><td>375.77</td><td>Africa</td><td>1960-10-01</td></tr>
    <tr><th>BGD</th><td>Bangladesh</td><td>167.09</td><td>147.57</td><td>245.63</td><td>Asia</td><td>1971-03-26</td></tr>
    <tr><th>RUS</th><td>Russia</td><td>146.79</td><td>17098.2</td><td>1530.75</td><td>NaN</td><td>1992-06-12</td></tr>
    <tr><th>MEX</th><td>Mexico</td><td>126.58</td><td>1964.38</td><td>1158.23</td><td>N.America</td><td>1810-09-16</td></tr>
    <tr><th>JPN</th><td>Japan</td><td>126.22</td><td>377.97</td><td>4872.42</td><td>Asia</td><td>NaN</td></tr>
    <tr><th>DEU</th><td>Germany</td><td>83.02</td><td>357.11</td><td>3693.2</td><td>Europe</td><td>NaN</td></tr>
    <tr><th>FRA</th><td>France</td><td>67.02</td><td>640.68</td><td>2582.49</td><td>Europe</td><td>1789-07-14</td></tr>
    <tr><th>GBR</th><td>UK</td><td>66.44</td><td>242.5</td><td>2631.23</td><td>Europe</td><td>NaN</td></tr>
    <tr><th>ITA</th><td>Italy</td><td>60.36</td><td>301.34</td><td>1943.84</td><td>Europe</td><td>NaN</td></tr>
    <tr><th>ARG</th><td>Argentina</td><td>44.94</td><td>2780.4</td><td>637.49</td><td>S.America</td><td>1816-07-09</td></tr>
    <tr><th>DZA</th><td>Algeria</td><td>43.38</td><td>2381.74</td><td>167.56</td><td>Africa</td><td>1962-07-05</td></tr>
    <tr><th>CAN</th><td>Canada</td><td>37.59</td><td>9984.67</td><td>1647.12</td><td>N.America</td><td>1867-07-01</td></tr>
    <tr><th>AUS</th><td>Australia</td><td>25.47</td><td>7692.02</td><td>1408.68</td><td>Oceania</td><td>NaN</td></tr>
    <tr><th>KAZ</th><td>Kazakhstan</td><td>18.53</td><td>2724.9</td><td>159.41</td><td>Asia</td><td>1991-12-16</td></tr>
  </tbody>
</table>

This file shows the DataFrame contents nicely. However, notice that you haven't obtained an entire web page. You've just output the data that corresponds to df in the HTML format.

.to_html() won't create a file if you don't provide the optional parameter buf, which denotes the buffer to write to. If you leave this parameter out, then your code will return a string as it did with .to_csv() and .to_json().

Here are some other optional parameters:

- header determines whether to save the column names.

- index determines whether to save the row labels.

- classes assigns cascading style sheet (CSS) classes.

- render_links specifies whether to convert URLs to HTML links.

- table_id assigns the CSS id to the table tag.

- escape decides whether to convert the characters <, >, and & to HTML-safe strings.

You use parameters like these to specify different aspects of the resulting files or strings.
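As a rough illustration of a few of these parameters, the sketch below renders a made-up DataFrame to an HTML string twice. The NOTE column, the CSS class countries, and the id country-table are hypothetical values chosen only to make the effects of classes, table_id, index, and escape visible.

```python
import pandas as pd

# The NOTE column deliberately contains characters that need escaping.
df = pd.DataFrame(
    {"COUNTRY": ["China", "India"], "NOTE": ["<b>largest</b>", "R&D hub"]},
    index=["CHN", "IND"],
)

# No buf argument, so to_html() returns the markup as a string.
html = df.to_html(index=False, classes="countries", table_id="country-table")
print(html)

# With escape=False, the cell contents are written as they are.
raw_html = df.to_html(index=False, escape=False)
print(raw_html)
```

Leaving escape at its default is the safer choice whenever the cell contents come from an untrusted source.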

You can create a DataFrame object from a suitable HTML file using read_html(), which will return a DataFrame instance or a list of them:

>>> df = pd.read_html('data.html', index_col=0, parse_dates=['IND_DAY'])

This is very similar to what you did when reading CSV files. You also have parameters that help you work with dates, missing values, precision, encoding, HTML parsers, and more.

Excel Files

You've already learned how to read and write Excel files with Pandas. However, there are a few more options worth considering. For one, when you use .to_excel(), you can specify the name of the target worksheet with the optional parameter sheet_name:

>>> df = pd.DataFrame(data=data).T
>>> df.to_excel('data.xlsx', sheet_name='COUNTRIES')

Here, you create a file data.xlsx with a worksheet called COUNTRIES that stores the data. The string 'data.xlsx' is the argument for the parameter excel_writer that defines the name of the Excel file or its path.

The optional parameters startrow and startcol both default to 0 and indicate the upper left-most cell where the data should start being written:

>>> df.to_excel('data-shifted.xlsx', sheet_name='COUNTRIES',
...             startrow=2, startcol=4)

Here, you specify that the table should start in the third row and the fifth column. You also used zero-based indexing, so the third row is denoted by 2 and the fifth column by 4.

Now the resulting worksheet looks like this:

As you can see, the table starts in the third row (row 3) and the fifth column (column E).
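The mapping from zero-based startrow and startcol values to the cell name that Excel displays can be sketched in plain Python. The helper excel_cell() below is hypothetical and not part of Pandas; it only illustrates the arithmetic.

```python
def excel_cell(startrow: int, startcol: int) -> str:
    """Return the Excel cell name for zero-based row and column offsets."""
    letters = ""
    col = startcol + 1  # Excel columns are one-based: 1 -> A, 27 -> AA
    while col > 0:
        # Peel off one base-26 "digit" at a time, from right to left.
        col, remainder = divmod(col - 1, 26)
        letters = chr(ord("A") + remainder) + letters
    return f"{letters}{startrow + 1}"  # Excel rows are one-based too


top_left = excel_cell(startrow=2, startcol=4)
print(top_left)
```

With startrow=2 and startcol=4, the upper left cell of the written table is E3, which matches the third row and the fifth column.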

.read_excel() also has the optional parameter sheet_name that specifies which worksheets to read when loading data. It can take on one of the following values:

- The zero-based index of the worksheet

- The name of the worksheet

- The list of indices or names to read multiple sheets

- The value None to read all sheets

Here's how you would use this parameter in your code:

>>> df = pd.read_excel('data.xlsx', sheet_name=0, index_col=0,
...                    parse_dates=['IND_DAY'])
>>> df = pd.read_excel('data.xlsx', sheet_name='COUNTRIES', index_col=0,
...                    parse_dates=['IND_DAY'])

Both statements above create the same DataFrame because sheet_name=0 and sheet_name='COUNTRIES' refer to the same worksheet. The argument parse_dates=['IND_DAY'] tells Pandas to try to consider the values in this column as dates or times.

There are other optional parameters you can use with .read_excel() and .to_excel() to determine the Excel engine, the encoding, the way to handle missing values and infinities, the method for writing column names and row labels, and so on.

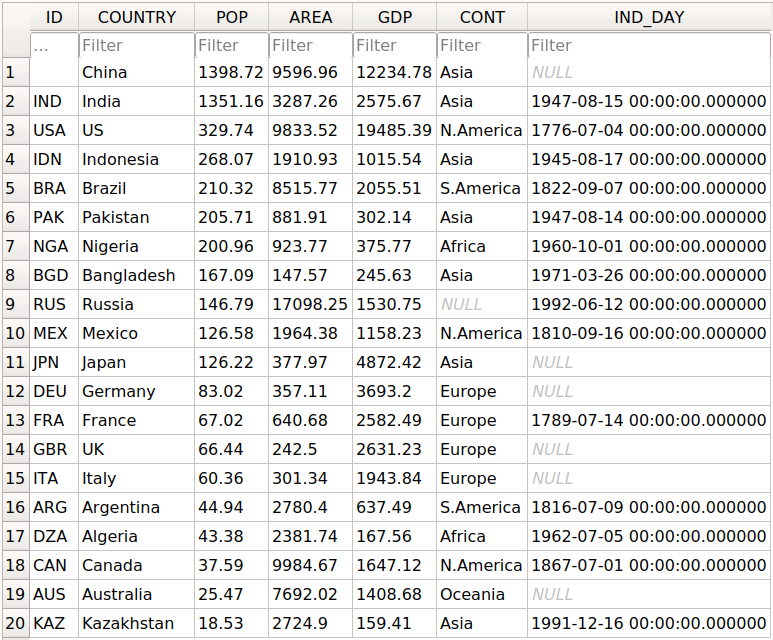

SQL Files

Pandas IO tools can also read and write databases. In this next example, you'll write your data to a database called data.db. To get started, you'll need the SQLAlchemy package. To learn more about it, you can read the official ORM tutorial. You'll also need the database driver. Python has a built-in driver for SQLite.

You can install SQLAlchemy with pip:

$ pip install sqlalchemy

You can also install it with Conda:

$ conda install sqlalchemy

Once you have SQLAlchemy installed, import create_engine() and create a database engine:

>>> from sqlalchemy import create_engine
>>> engine = create_engine('sqlite:///data.db', echo=False)

Now that you have everything set up, the next step is to create a DataFrame object. It's convenient to specify the data types and apply .to_sql().

>>> dtypes = {'POP': 'float64', 'AREA': 'float64', 'GDP': 'float64',
...           'IND_DAY': 'datetime64[ns]'}
>>> df = pd.DataFrame(data=data).T.astype(dtype=dtypes)
>>> df.dtypes
COUNTRY            object
POP               float64
AREA              float64
GDP               float64
CONT               object
IND_DAY    datetime64[ns]
dtype: object

.astype() is a very convenient method you can use to set multiple data types at once.
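The following sketch shows why the conversion matters, using a made-up two-country dictionary in place of the tutorial's data. After transposing, every column has the object data type, and .astype() with a dictionary converts only the columns you name.

```python
import pandas as pd

# A made-up subset of the data dictionary: one inner dict per country.
data = {
    "CHN": {"COUNTRY": "China", "POP": 1398.72, "IND_DAY": None},
    "IND": {"COUNTRY": "India", "POP": 1351.16, "IND_DAY": "1947-08-15"},
}

# Transposing mixes strings and numbers, so the columns become object.
df = pd.DataFrame(data=data).T
print(df.dtypes)

# Columns missing from the mapping (here COUNTRY) keep their data type.
typed = df.astype(dtype={"POP": "float64", "IND_DAY": "datetime64[ns]"})
print(typed.dtypes)
```

The missing independence day becomes NaT in the converted column, which is how Pandas represents a missing date or time.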

Once you've created your DataFrame, you can save it to the database with .to_sql():

>>> df.to_sql('data.db', con=engine, index_label='ID')

The parameter con is used to specify the database connection or engine that you want to use. The optional parameter index_label specifies how to call the database column with the row labels. You'll often see it take on the value ID, Id, or id.

You should get the database data.db with a single table that looks like this:

The first column contains the row labels. To omit writing them into the database, pass index=False to .to_sql(). The other columns correspond to the columns of the DataFrame.

There are a few more optional parameters. For example, you can use schema to specify the database schema and dtype to determine the types of the database columns. You can also use if_exists, which says what to do if a table with the same name already exists:

- if_exists='fail' raises a ValueError and is the default.

- if_exists='replace' drops the table and inserts new values.

- if_exists='append' inserts new values into the table.
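The three if_exists modes can be tried out without creating any files. This sketch is an assumption-laden variant of the tutorial's setup: it uses an in-memory SQLite database through the standard sqlite3 module instead of an SQLAlchemy engine, and the table name countries is made up.

```python
import sqlite3

import pandas as pd

df = pd.DataFrame(
    {"COUNTRY": ["China", "India"], "POP": [1398.72, 1351.16]},
    index=["CHN", "IND"],
)

# Assumption: a plain sqlite3 connection instead of an SQLAlchemy engine.
con = sqlite3.connect(":memory:")
df.to_sql("countries", con=con, index_label="ID")

# Writing again with the default if_exists='fail' raises a ValueError.
failure = None
try:
    df.to_sql("countries", con=con, index_label="ID")
except ValueError as error:
    failure = str(error)

# 'append' adds the rows to the existing table.
df.to_sql("countries", con=con, index_label="ID", if_exists="append")
appended = pd.read_sql("SELECT * FROM countries", con=con, index_col="ID")

# 'replace' drops the table first and then writes the rows.
df.to_sql("countries", con=con, index_label="ID", if_exists="replace")
replaced = pd.read_sql("SELECT * FROM countries", con=con, index_col="ID")

con.close()
print(failure, len(appended), len(replaced))
```

Note that 'append' doesn't check for duplicates, so the appended table holds every country twice.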

You can load the data from the database with read_sql():

>>> df = pd.read_sql('data.db', con=engine, index_col='ID')
>>> df
        COUNTRY      POP      AREA       GDP       CONT    IND_DAY
ID
CHN       China  1398.72   9596.96  12234.78       Asia        NaT
IND       India  1351.16   3287.26   2575.67       Asia 1947-08-15
USA          US   329.74   9833.52  19485.39  N.America 1776-07-04
IDN   Indonesia   268.07   1910.93   1015.54       Asia 1945-08-17
BRA      Brazil   210.32   8515.77   2055.51  S.America 1822-09-07
PAK    Pakistan   205.71    881.91    302.14       Asia 1947-08-14
NGA     Nigeria   200.96    923.77    375.77     Africa 1960-10-01
BGD  Bangladesh   167.09    147.57    245.63       Asia 1971-03-26
RUS      Russia   146.79  17098.25   1530.75       None 1992-06-12
MEX      Mexico   126.58   1964.38   1158.23  N.America 1810-09-16
JPN       Japan   126.22    377.97   4872.42       Asia        NaT
DEU     Germany    83.02    357.11   3693.20     Europe        NaT
FRA      France    67.02    640.68   2582.49     Europe 1789-07-14
GBR          UK    66.44    242.50   2631.23     Europe        NaT
ITA       Italy    60.36    301.34   1943.84     Europe        NaT
ARG   Argentina    44.94   2780.40    637.49  S.America 1816-07-09
DZA     Algeria    43.38   2381.74    167.56     Africa 1962-07-05
CAN      Canada    37.59   9984.67   1647.12  N.America 1867-07-01
AUS   Australia    25.47   7692.02   1408.68    Oceania        NaT
KAZ  Kazakhstan    18.53   2724.90    159.41       Asia 1991-12-16

The parameter index_col specifies the name of the column with the row labels. Note that this inserts an extra row after the header that starts with ID. You can fix this behavior with the following line of code:

>>> df.index.name = None
>>> df
        COUNTRY      POP      AREA       GDP       CONT    IND_DAY
CHN       China  1398.72   9596.96  12234.78       Asia        NaT
IND       India  1351.16   3287.26   2575.67       Asia 1947-08-15
USA          US   329.74   9833.52  19485.39  N.America 1776-07-04
IDN   Indonesia   268.07   1910.93   1015.54       Asia 1945-08-17
BRA      Brazil   210.32   8515.77   2055.51  S.America 1822-09-07
PAK    Pakistan   205.71    881.91    302.14       Asia 1947-08-14
NGA     Nigeria   200.96    923.77    375.77     Africa 1960-10-01
BGD  Bangladesh   167.09    147.57    245.63       Asia 1971-03-26
RUS      Russia   146.79  17098.25   1530.75       None 1992-06-12
MEX      Mexico   126.58   1964.38   1158.23  N.America 1810-09-16
JPN       Japan   126.22    377.97   4872.42       Asia        NaT
DEU     Germany    83.02    357.11   3693.20     Europe        NaT
FRA      France    67.02    640.68   2582.49     Europe 1789-07-14
GBR          UK    66.44    242.50   2631.23     Europe        NaT
ITA       Italy    60.36    301.34   1943.84     Europe        NaT
ARG   Argentina    44.94   2780.40    637.49  S.America 1816-07-09
DZA     Algeria    43.38   2381.74    167.56     Africa 1962-07-05
CAN      Canada    37.59   9984.67   1647.12  N.America 1867-07-01
AUS   Australia    25.47   7692.02   1408.68    Oceania        NaT
KAZ  Kazakhstan    18.53   2724.90    159.41       Asia 1991-12-16

Now you have the same DataFrame object as before.

Note that the continent for Russia is now None instead of nan. If you want to fill the missing values with nan, then you can use .fillna():

>>> df.fillna(value=float('nan'), inplace=True)

.fillna() replaces all missing values with whatever you pass to value. Here, you passed float('nan'), which says to fill all missing values with nan.

Also note that you didn't have to pass parse_dates=['IND_DAY'] to read_sql(). That's because your database was able to detect that the last column contains dates. However, you can pass parse_dates if you'd like. You'll get the same results.

There are other functions that you can use to read databases, like read_sql_table() and read_sql_query(). Feel free to try them out!
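As a minimal sketch of read_sql_query(), the example below uses an in-memory SQLite database from the standard library rather than the engine and data.db table from this tutorial. The table name countries and the three sample rows are assumptions made purely for illustration.

```python
import sqlite3

import pandas as pd

# Hypothetical sample: three rows in the spirit of the tutorial's dataset.
sample = pd.DataFrame(
    {
        "COUNTRY": ["China", "India", "US"],
        "AREA": [9596.96, 3287.26, 9833.52],
        "IND_DAY": [None, "1947-08-15", "1776-07-04"],
    },
    index=["CHN", "IND", "USA"],
)

# An in-memory SQLite database stands in for a real database file.
con = sqlite3.connect(":memory:")
sample.to_sql("countries", con, index_label="ID")

# read_sql_query() runs a SELECT statement, so the database filters the
# rows and columns before Pandas builds the DataFrame.
df = pd.read_sql_query(
    "SELECT ID, COUNTRY, IND_DAY FROM countries WHERE AREA > 5000 ORDER BY ID",
    con,
    index_col="ID",
    parse_dates=["IND_DAY"],
)
con.close()
print(df)
```

Because the dates are stored as text in this sketch, parse_dates is passed explicitly. Whether a database reports a column as a date type depends on the backend and on how the table was created.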

Pickle Files

Pickling is the act of converting Python objects into byte streams. Unpickling is the inverse process. Python pickle files are the binary files that keep the data and hierarchy of Python objects. They usually have the extension .pickle or .pkl.

You can save your DataFrame in a pickle file with .to_pickle():

>>> dtypes = {'POP': 'float64', 'AREA': 'float64', 'GDP': 'float64',
...           'IND_DAY': 'datetime64'}
>>> df = pd.DataFrame(data=data).T.astype(dtype=dtypes)
>>> df.to_pickle('data.pickle')

Like you did with databases, it can be convenient first to specify the data types. Then, you create a file data.pickle to contain your data. You could also pass an integer value to the optional parameter protocol, which specifies the protocol of the pickler.

You can get the data from a pickle file with read_pickle():

>>> df = pd.read_pickle('data.pickle')
>>> df
        COUNTRY      POP      AREA       GDP       CONT     IND_DAY
CHN       China  1398.72   9596.96  12234.78       Asia         NaT
IND       India  1351.16   3287.26   2575.67       Asia  1947-08-15
USA          US   329.74   9833.52  19485.39  N.America  1776-07-04
IDN   Indonesia   268.07   1910.93   1015.54       Asia  1945-08-17
BRA      Brazil   210.32   8515.77   2055.51  S.America  1822-09-07
PAK    Pakistan   205.71    881.91    302.14       Asia  1947-08-14
NGA     Nigeria   200.96    923.77    375.77     Africa  1960-10-01
BGD  Bangladesh   167.09    147.57    245.63       Asia  1971-03-26
RUS      Russia   146.79  17098.25   1530.75        NaN  1992-06-12
MEX      Mexico   126.58   1964.38   1158.23  N.America  1810-09-16
JPN       Japan   126.22    377.97   4872.42       Asia         NaT
DEU     Germany    83.02    357.11   3693.20     Europe         NaT
FRA      France    67.02    640.68   2582.49     Europe  1789-07-14
GBR          UK    66.44    242.50   2631.23     Europe         NaT
ITA       Italy    60.36    301.34   1943.84     Europe         NaT
ARG   Argentina    44.94   2780.40    637.49  S.America  1816-07-09
DZA     Algeria    43.38   2381.74    167.56     Africa  1962-07-05
CAN      Canada    37.59   9984.67   1647.12  N.America  1867-07-01
AUS   Australia    25.47   7692.02   1408.68    Oceania         NaT
KAZ  Kazakhstan    18.53   2724.90    159.41       Asia  1991-12-16

read_pickle() returns the DataFrame with the stored data. You can also check the data types:

>>> df.dtypes
COUNTRY            object
POP               float64
AREA              float64
GDP               float64
CONT               object
IND_DAY    datetime64[ns]
dtype: object

These are the same ones that you specified before using .to_pickle().
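The round trip described above can be sketched in a self-contained form. The two-row frame and the file name sample.pickle are hypothetical stand-ins for the tutorial's data, and the file is written to a temporary directory so nothing is left behind.

```python
import pickle
import tempfile
from pathlib import Path

import pandas as pd

# Hypothetical two-row frame with a float column and a date column.
original = pd.DataFrame(
    {
        "POP": [1351.16, 329.74],
        "IND_DAY": pd.to_datetime(["1947-08-15", "1776-07-04"]),
    },
    index=["IND", "USA"],
)

with tempfile.TemporaryDirectory() as tmp:
    path = Path(tmp) / "sample.pickle"
    # protocol is optional; here the highest protocol available is requested.
    original.to_pickle(path, protocol=pickle.HIGHEST_PROTOCOL)
    restored = pd.read_pickle(path)

# The data types survive the round trip without dtype= or parse_dates=.
print(restored.dtypes)
same_dtypes = list(restored.dtypes) == list(original.dtypes)
print(same_dtypes)
```

A higher protocol is usually more compact, but files written with it may not be readable by older Python versions, so the choice depends on who needs to load the file.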

As a word of caution, you should always beware of loading pickles from untrusted sources. This can be dangerous! When you unpickle an untrustworthy file, it could execute arbitrary code on your machine, gain remote access to your computer, or otherwise exploit your device in other ways.
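To see why unpickling can run code, consider this deliberately harmless sketch that uses only the standard library. The class name Payload is hypothetical, and the call it smuggles in is just an arithmetic expression.

```python
import pickle


class Payload:
    """Hypothetical class showing how a pickle can carry a function call."""

    def __reduce__(self):
        # pickle stores "call eval('6 * 7')" instead of the object's state.
        # A malicious file could name any importable callable here.
        return (eval, ("6 * 7",))


blob = pickle.dumps(Payload())

# Unpickling runs the stored call and returns its result, not a Payload.
result = pickle.loads(blob)
print(result)  # 42
```

Since read_pickle() relies on the same unpickling machinery, the same caution applies to pickle files loaded through Pandas.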

Working With Large Data

If your files are too large for saving or processing, then there are several approaches you can take to reduce the required disk space:

- Compress your files

- Choose only the columns you want

- Omit the rows you don't need

- Force the use of less precise data types

- Split the data into chunks

You'll take a look at each of these techniques in turn.

Compress and Decompress Files

You can create an archive file like you would a regular one, with the addition of a suffix that corresponds to the desired compression type:

- '.gz'

- '.bz2'

- '.zip'

- '.xz'

Pandas can deduce the compression type by itself:

>>> df = pd.DataFrame(data=data).T
>>> df.to_csv('data.csv.zip')

Here, you create a compressed .csv file as an archive. The size of the regular .csv file is 1048 bytes, while the compressed file only has 766 bytes.

You can open this compressed file as usual with the Pandas read_csv() function:

>>> df = pd.read_csv('data.csv.zip', index_col=0,
...                  parse_dates=['IND_DAY'])
>>> df
        COUNTRY      POP      AREA       GDP       CONT     IND_DAY
CHN       China  1398.72   9596.96  12234.78       Asia         NaT
IND       India  1351.16   3287.26   2575.67       Asia  1947-08-15
USA          US   329.74   9833.52  19485.39  N.America  1776-07-04
IDN   Indonesia   268.07   1910.93   1015.54       Asia  1945-08-17
BRA      Brazil   210.32   8515.77   2055.51  S.America  1822-09-07
PAK    Pakistan   205.71    881.91    302.14       Asia  1947-08-14
NGA     Nigeria   200.96    923.77    375.77     Africa  1960-10-01
BGD  Bangladesh   167.09    147.57    245.63       Asia  1971-03-26
RUS      Russia   146.79  17098.25   1530.75        NaN  1992-06-12
MEX      Mexico   126.58   1964.38   1158.23  N.America  1810-09-16
JPN       Japan   126.22    377.97   4872.42       Asia         NaT
DEU     Germany    83.02    357.11   3693.20     Europe         NaT
FRA      France    67.02    640.68   2582.49     Europe  1789-07-14
GBR          UK    66.44    242.50   2631.23     Europe         NaT
ITA       Italy    60.36    301.34   1943.84     Europe         NaT
ARG   Argentina    44.94   2780.40    637.49  S.America  1816-07-09
DZA     Algeria    43.38   2381.74    167.56     Africa  1962-07-05
CAN      Canada    37.59   9984.67   1647.12  N.America  1867-07-01
AUS   Australia    25.47   7692.02   1408.68    Oceania         NaT
KAZ  Kazakhstan    18.53   2724.90    159.41       Asia  1991-12-16

read_csv() decompresses the file before reading it into a DataFrame.

You can specify the type of compression with the optional parameter compression, which can take on any of the following values:

- 'infer'

- 'gzip'

- 'bz2'

- 'zip'

- 'xz'

- None

The default value compression='infer' indicates that Pandas should deduce the compression type from the file extension.
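The difference between inferred and explicit compression can be sketched as follows. The file names and the repetitive sample data are assumptions chosen so that the example is self-contained and the size difference is visible. The byte counts it prints are not the ones quoted for data.csv above.

```python
import tempfile
from pathlib import Path

import pandas as pd

# Hypothetical, repetitive data so that compression has something to gain.
df = pd.DataFrame({"CONT": ["Asia", "Europe"] * 500, "VALUE": range(1000)})

with tempfile.TemporaryDirectory() as tmp:
    plain = Path(tmp) / "sample.csv"
    inferred = Path(tmp) / "sample.csv.gz"  # gzip is inferred from the suffix
    explicit = Path(tmp) / "sample.data"    # the suffix says nothing

    df.to_csv(plain)
    df.to_csv(inferred)
    df.to_csv(explicit, compression="gzip")

    sizes = {p.name: p.stat().st_size for p in (plain, inferred, explicit)}

    # Reading mirrors writing: infer from the suffix, or state it explicitly.
    from_inferred = pd.read_csv(inferred, index_col=0)
    from_explicit = pd.read_csv(explicit, index_col=0, compression="gzip")

print(sizes)
```

When the suffix doesn't reveal the format, as with sample.data here, the same compression value has to be passed both when writing and when reading.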

Here's how you would compress a pickle file:

>>> df = pd.DataFrame(data=data).T
>>> df.to_pickle('data.pickle.compress', compression='gzip')

You should get the file data.pickle.compress that you can later decompress and read:

>>> df = pd.read_pickle('data.pickle.compress', compression='gzip')

df again corresponds to the DataFrame with the same data as before.

You can give the other compression methods a try, as well. If you're using pickle files, then keep in mind that the .zip format supports reading only.

Choose Columns

The Pandas read_csv() and read_excel() functions accept the optional parameter usecols that you can use to specify the columns you want to load from the file. You can pass the list of column names as the corresponding argument:

>>> df = pd.read_csv('data.csv', usecols=['COUNTRY', 'AREA'])
>>> df
       COUNTRY      AREA
0        China   9596.96
1        India   3287.26
2           US   9833.52
3    Indonesia   1910.93
4       Brazil   8515.77
5     Pakistan    881.91
6      Nigeria    923.77
7   Bangladesh    147.57
8       Russia  17098.25
9       Mexico   1964.38
10       Japan    377.97
11     Germany    357.11
12      France    640.68
13          UK    242.50
14       Italy    301.34
15   Argentina   2780.40
16     Algeria   2381.74
17      Canada   9984.67
18   Australia   7692.02
19  Kazakhstan   2724.90

Now you have a DataFrame that contains less data than before. Here, there are only the names of the countries and their areas.

Instead of the column names, you can also pass their indices:

>>> df = pd.read_csv('data.csv', index_col=0, usecols=[0, 1, 3])
>>> df
        COUNTRY      AREA
CHN       China   9596.96
IND       India   3287.26
USA          US   9833.52
IDN   Indonesia   1910.93
BRA      Brazil   8515.77
PAK    Pakistan    881.91
NGA     Nigeria    923.77
BGD  Bangladesh    147.57
RUS      Russia  17098.25
MEX      Mexico   1964.38
JPN       Japan    377.97
DEU     Germany    357.11
FRA      France    640.68
GBR          UK    242.50
ITA       Italy    301.34
ARG   Argentina   2780.40
DZA     Algeria   2381.74
CAN      Canada   9984.67
AUS   Australia   7692.02
KAZ  Kazakhstan   2724.90

Compare these results with the contents of the file 'data.csv':

,COUNTRY,POP,AREA,GDP,CONT,IND_DAY
CHN,China,1398.72,9596.96,12234.78,Asia,
IND,India,1351.16,3287.26,2575.67,Asia,1947-08-15
USA,US,329.74,9833.52,19485.39,N.America,1776-07-04
IDN,Indonesia,268.07,1910.93,1015.54,Asia,1945-08-17
BRA,Brazil,210.32,8515.77,2055.51,S.America,1822-09-07
PAK,Pakistan,205.71,881.91,302.14,Asia,1947-08-14
NGA,Nigeria,200.96,923.77,375.77,Africa,1960-10-01
BGD,Bangladesh,167.09,147.57,245.63,Asia,1971-03-26
RUS,Russia,146.79,17098.25,1530.75,,1992-06-12
MEX,Mexico,126.58,1964.38,1158.23,N.America,1810-09-16
JPN,Japan,126.22,377.97,4872.42,Asia,
DEU,Germany,83.02,357.11,3693.2,Europe,
FRA,France,67.02,640.68,2582.49,Europe,1789-07-14
GBR,UK,66.44,242.5,2631.23,Europe,
ITA,Italy,60.36,301.34,1943.84,Europe,
ARG,Argentina,44.94,2780.4,637.49,S.America,1816-07-09
DZA,Algeria,43.38,2381.74,167.56,Africa,1962-07-05
CAN,Canada,37.59,9984.67,1647.12,N.America,1867-07-01
AUS,Australia,25.47,7692.02,1408.68,Oceania,
KAZ,Kazakhstan,18.53,2724.9,159.41,Asia,1991-12-16

You can see the following columns:

- The column at index 0 contains the row labels.

- The column at index 1 contains the country names.

- The column at index 3 contains the areas.
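The two ways of selecting columns can be compared side by side in a short sketch. It inlines a three-row excerpt of data.csv through io.StringIO so that it runs without the file on disk. The variable names are illustrative.

```python
import io

import pandas as pd

# A three-row excerpt of data.csv, inlined so the sketch is self-contained.
csv_text = """,COUNTRY,POP,AREA,GDP,CONT,IND_DAY
CHN,China,1398.72,9596.96,12234.78,Asia,
IND,India,1351.16,3287.26,2575.67,Asia,1947-08-15
USA,US,329.74,9833.52,19485.39,N.America,1776-07-04
"""

# Select by position: 0 is the label column, 1 is COUNTRY, 3 is AREA.
by_position = pd.read_csv(io.StringIO(csv_text), index_col=0, usecols=[0, 1, 3])

# Select by name: the unnamed first column is simply not requested.
by_name = pd.read_csv(io.StringIO(csv_text), usecols=["COUNTRY", "AREA"])

print(list(by_position.columns))
print(list(by_name.columns))
```

Both calls load the same two data columns. The positional form additionally keeps the row labels because column 0 is included and used as the index.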

Similarly, read_sql() has the optional parameter columns that takes a list of column names to read:

>>> df = pd.read_sql('data.db', con=engine, index_col='ID',
...                  columns=['COUNTRY', 'AREA'])
>>> df.index.name = None
>>> df
        COUNTRY      AREA
CHN       China   9596.96
IND       India   3287.26
USA          US   9833.52
IDN   Indonesia   1910.93
BRA      Brazil   8515.77
PAK    Pakistan    881.91
NGA     Nigeria    923.77
BGD  Bangladesh    147.57
RUS      Russia  17098.25
MEX      Mexico   1964.38
JPN       Japan    377.97
DEU     Germany    357.11
FRA      France    640.68
GBR          UK    242.50
ITA       Italy    301.34
ARG   Argentina   2780.40
DZA     Algeria   2381.74
CAN      Canada   9984.67
AUS   Australia   7692.02
KAZ  Kazakhstan   2724.90

Again, the DataFrame only contains the columns with the names of the countries and areas. If columns is None or omitted, then all of the columns will be read, as you saw before. The default behavior is columns=None.

Omit Rows

When you test an algorithm for data processing or machine learning, you often don't need the entire dataset. It's convenient to load only a subset of the data to speed up the process. The Pandas read_csv() and read_excel() functions have some optional parameters that allow you to select which rows you want to load:

- skiprows: either the number of rows to skip at the beginning of the file if it's an integer, or the zero-based indices of the rows to skip if it's a list-like object

- skipfooter: the number of rows to skip at the end of the file

- nrows: the number of rows to read
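As a rough sketch of how these three parameters interact, the following example reads a small CSV that is built in memory. The six-row file and its labels r0 to r5 are made up for illustration.

```python
import io

import pandas as pd

# Hypothetical file: a header line followed by six data rows, r0 to r5.
csv_text = "ID,VALUE\n" + "".join(f"r{i},{i}\n" for i in range(6))

# nrows: read only the first three data rows.
head = pd.read_csv(io.StringIO(csv_text), index_col=0, nrows=3)

# An integer skiprows would also skip the header line, so a list of
# zero-based line indices is used to drop the first two data rows.
# skipfooter isn't supported by the default C engine, so the Python
# parsing engine is requested explicitly.
middle = pd.read_csv(
    io.StringIO(csv_text),
    index_col=0,
    skiprows=[1, 2],
    skipfooter=2,
    engine="python",
)

print(list(head.index))    # ['r0', 'r1', 'r2']
print(list(middle.index))  # ['r2', 'r3']
```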

Here's how you would skip rows with odd zero-based indices, keeping the even ones:

>>> df = pd.read_csv('data.csv', index_col=0, skiprows=range(1, 20, 2))
>>> df
        COUNTRY      POP     AREA      GDP       CONT     IND_DAY
IND       India  1351.16  3287.26  2575.67       Asia  1947-08-15
IDN   Indonesia   268.07  1910.93  1015.54       Asia  1945-08-17
PAK    Pakistan   205.71   881.91   302.14       Asia  1947-08-14
BGD  Bangladesh   167.09   147.57   245.63       Asia  1971-03-26
MEX      Mexico   126.58  1964.38  1158.23  N.America  1810-09-16
DEU     Germany    83.02   357.11  3693.20     Europe         NaN
GBR          UK    66.44   242.50  2631.23     Europe         NaN
ARG   Argentina    44.94  2780.40   637.49  S.America  1816-07-09
CAN      Canada    37.59  9984.67  1647.12  N.America  1867-07-01
KAZ  Kazakhstan    18.53  2724.90   159.41       Asia  1991-12-16

In this example, skiprows is range(1, 20, 2) and corresponds to the values 1, 3, …, 19. The instances of the Python built-in class range behave like sequences. The first row of the file data.csv is the header row. It has the index 0, so Pandas loads it in. The second row with index 1 corresponds to the label CHN, and Pandas skips it. The third row with the index 2 and label IND is loaded, and so on.

If you want to choose rows randomly, then skiprows can be a list or NumPy array with pseudo-random numbers, obtained either with pure Python or with NumPy.
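One possible way to do this with pure Python is sketched below. The in-memory file, the seed, and the names n_rows, n_keep, and to_skip are all assumptions made for the example.

```python
import io
import random

import pandas as pd

n_rows, n_keep = 20, 5

# Hypothetical file: a header line followed by n_rows data rows.
csv_text = "ID,VALUE\n" + "".join(f"r{i},{i}\n" for i in range(n_rows))

# Data rows occupy line indices 1..n_rows; index 0 is the header and must
# never be skipped. Draw the rows to drop without replacement.
rng = random.Random(42)  # fixed seed so the sample is reproducible
to_skip = rng.sample(range(1, n_rows + 1), n_rows - n_keep)

df = pd.read_csv(io.StringIO(csv_text), index_col=0, skiprows=to_skip)
print(df)
```

Sampling the rows to skip, rather than the rows to keep, matches what skiprows expects. It does require knowing the number of data rows in advance.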

Force Less Precise Data Types

If you're okay with less precise data types, then you can potentially save a significant amount of memory! First, get the data types with .dtypes again:

>>> df = pd.read_csv('data.csv', index_col=0, parse_dates=['IND_DAY'])
>>> df.dtypes
COUNTRY            object
POP               float64
AREA              float64
GDP               float64
CONT               object
IND_DAY    datetime64[ns]
dtype: object

The columns with the floating-point numbers are 64-bit floats. Each number of this type float64 consumes 64 bits or 8 bytes. Each column has 20 numbers and requires 160 bytes. You can verify this with .memory_usage():
